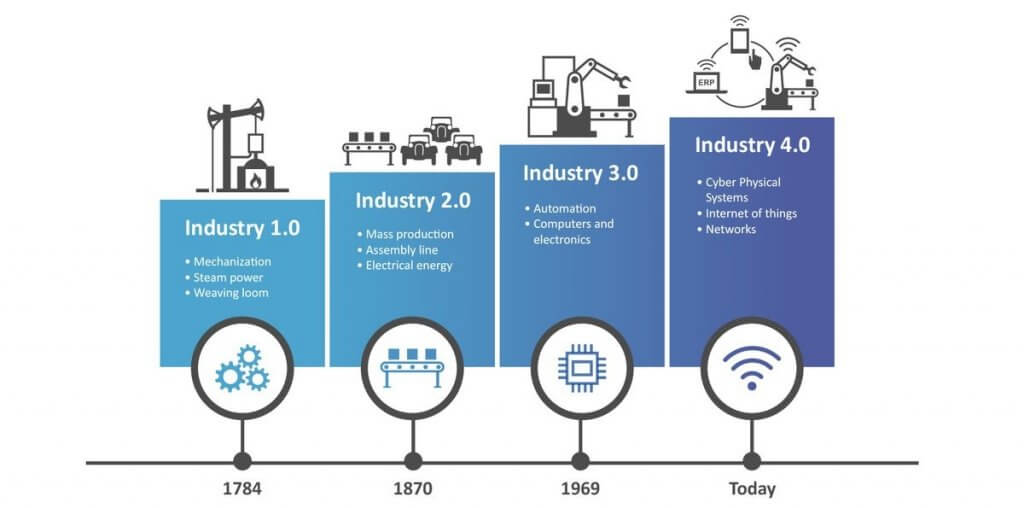

Have you ever heard the term industrial revolution? After the eras of mechanization, electrification, and digitization, we are now in the fourth revolution, called Industry 4.0. It’s time to bring all these technologies together.

The industrial revolution

History

Can you imagine that it all started when the steam engine was invented and introduced?

- The First Industrial Revolution began around 1760 and was marked by the transition from hand production methods to machines.

- The Second Industrial Revolution began around 1871 and was driven by the installation of extensive railroad and telegraph networks. Increasing electrification allowed factories to develop modern production lines.

- In the late 20th century, industry shifted from mechanical and analogue electronic technology to digital electronics, starting the Information Age.

- Finally, the ongoing automation of traditional manufacturing and industrial practices using modern smart technology constitutes the Fourth Industrial Revolution.

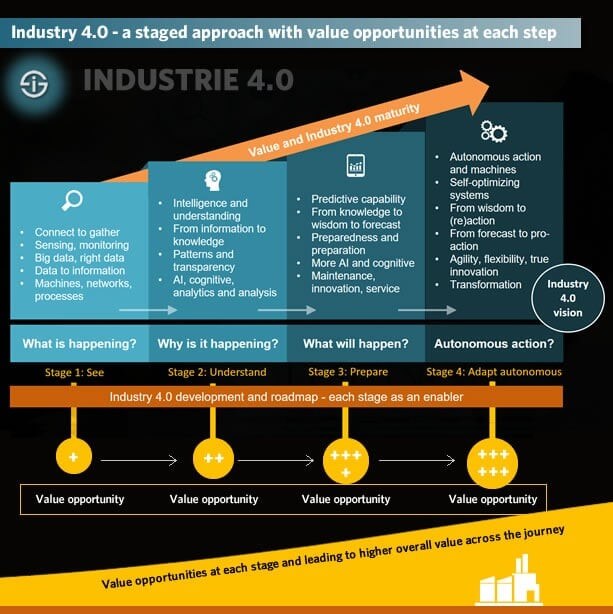

Large-scale machine-to-machine communication and the Internet of Things are integrated for increased automation, improved communication, and the production of smart machines that can analyze and diagnose issues without human intervention.

Present day

As a member of a project like this, I will help you explore the world of the modern automated factory. We will go through the overall technology stack and look at the interesting possibilities of today’s technology. Most importantly, we will not talk about the future, but about solutions that have been around for quite some time and have already been tested.

We have to find out:

- Where is our starting point?

- What do we want to achieve?

- Which technologies will help us?

Let’s suppose that we have a typical factory of today: mostly automated, but still requiring a lot of human intervention to get good results. The goal of the project is to create a platform that will manage and communicate with:

- factory and machine sensors,

- actuators,

- process control systems.

Giving all authorized parties access to raw and processed data, both on-site and remotely, enables building and running complex modeling and simulation processes. Only once remote maintenance and optimization are possible can we introduce prediction or self-optimization algorithms.

General technical

Edge Device

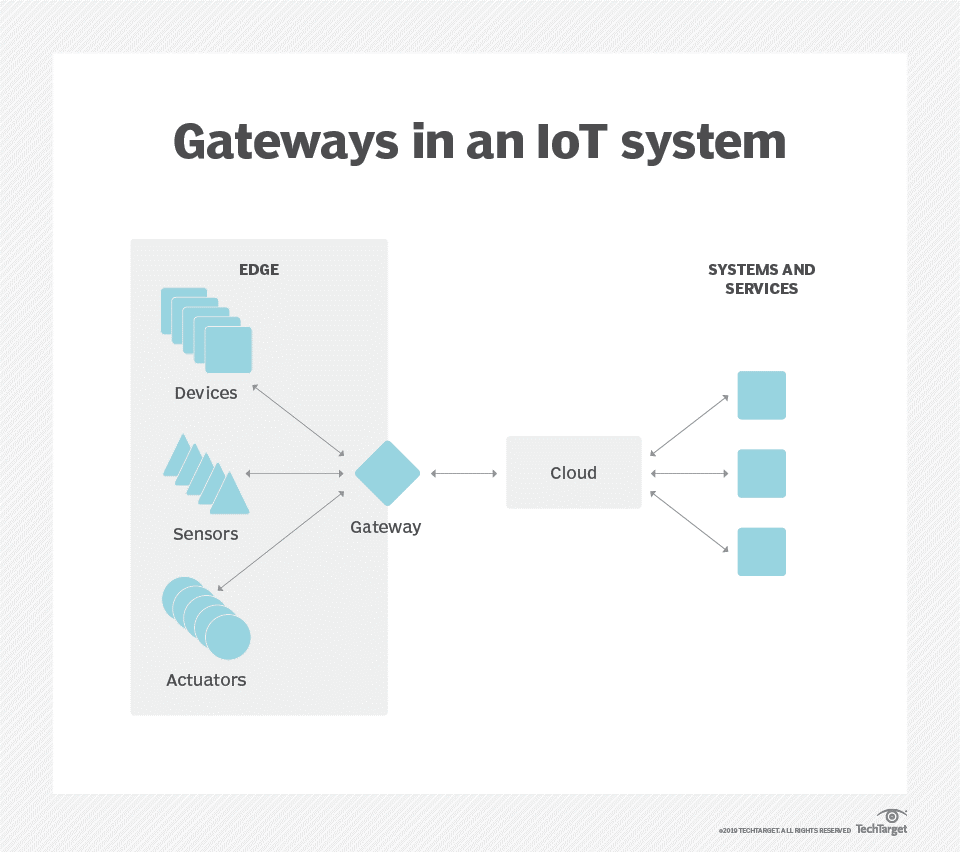

The biggest challenge is interconnecting the local infrastructure. To begin with, we need a computing platform that allows us to collect, analyze, and act on real-time data. It is also referred to as an “edge gateway”, because it sits on the edge of two worlds.

This platform is called the Edge Device, and it offers out-of-the-box support for a wide range of native drivers for devices such as PLCs, controllers, and sensors, so all of the industrial assets mentioned above can be connected. In most cases, it is an industrial workstation running a Linux-based OS, installed in the server room.

We can access the Edge Device from anywhere over a software-defined private network, using a web interface or a terminal interface (when we do not have access to the network), or we can manipulate data programmatically via REST APIs. The Edge Device is built with security at every step and offers certificate management, authentication, and encryption to protect all communication at the transport layer (using SSL/TLS).
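Programmatic REST access can be sketched with the Python standard library alone. This is a minimal example under stated assumptions: the base URL `https://edge.example.local`, the path `/api/v1/tags`, and bearer-token authentication are hypothetical placeholders, since the real endpoints and auth scheme depend on the Edge Device vendor. The point it illustrates is that every call goes over HTTPS with certificate verification switched on.

```python
import json
import ssl
import urllib.request
from typing import Optional

# Hypothetical value: the real host, paths and auth scheme depend on the Edge Device vendor.
EDGE_BASE_URL = "https://edge.example.local"


def build_get_request(path: str, token: str) -> urllib.request.Request:
    """Prepare an authenticated GET request for a (hypothetical) REST endpoint."""
    return urllib.request.Request(
        url=EDGE_BASE_URL + path,
        method="GET",
        headers={"Authorization": f"Bearer {token}", "Accept": "application/json"},
    )


def fetch_json(path: str, token: str, ca_file: Optional[str] = None, timeout: float = 10.0):
    """Send the request over TLS, verifying the server certificate, and decode the JSON body."""
    # create_default_context() enables certificate and hostname verification;
    # ca_file lets us trust a private CA, which is common for on-premises devices.
    context = ssl.create_default_context(cafile=ca_file)
    request = build_get_request(path, token)
    with urllib.request.urlopen(request, context=context, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))
```

A call would then look like `fetch_json("/api/v1/tags", token="...", ca_file="edge-ca.pem")`, where the CA file name is also hypothetical.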

Once the device data is collected, the Edge Device normalizes it and shares it with the cloud.
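What “normalizes” means exactly depends on the product, but a typical step is converting driver-specific samples into one common record. The sketch below assumes a hypothetical schema (`Reading`) and a hypothetical unit table; it only illustrates the idea of consistent tag names, units, numeric types, and UTC timestamps before anything leaves the factory.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone

# Hypothetical conversions into the units agreed for the cloud side.
TO_CANONICAL = {
    "degC": ("degC", lambda v: v),
    "degF": ("degC", lambda v: (v - 32.0) * 5.0 / 9.0),
    "kW": ("kW", lambda v: v),
    "W": ("kW", lambda v: v / 1000.0),
}


@dataclass(frozen=True)
class Reading:
    """Common record format shared with the cloud (hypothetical schema)."""
    device_id: str
    tag: str
    value: float
    unit: str
    timestamp: str  # ISO 8601, UTC


def normalize(device_id: str, tag: str, raw_value, unit: str, epoch_ms: int) -> Reading:
    """Turn a driver-specific sample into the common record format."""
    canonical_unit, convert = TO_CANONICAL[unit]  # KeyError for units we do not know
    return Reading(
        device_id=device_id,
        tag=tag.strip().lower(),          # consistent tag naming across drivers
        value=convert(float(raw_value)),  # drivers may report ints, strings or floats
        unit=canonical_unit,
        timestamp=datetime.fromtimestamp(epoch_ms / 1000, tz=timezone.utc).isoformat(),
    )


def to_json(reading: Reading) -> str:
    """Serialize a record for sending to the cloud."""
    return json.dumps(asdict(reading))
```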

Communication protocol

How do we connect to all this equipment from more than three hundred companies, including ABB, Matrikon, Siemens, and Yokogawa? Let’s suppose that we had a protocol focused on communicating with industrial hardware and systems for data collection and control…

Fortunately, there is the OPC Foundation, an organization whose task is to provide broad opportunities for cooperation in the field of automation by creating and maintaining an open communication standard called OPC Unified Architecture (OPC UA).

This machine-to-machine protocol is the result of many years of cooperation among industry leaders, who created a richer and more complete service-oriented open standard than its predecessor, OPC Classic, for secure information exchange in process management. This makes it much easier to collect data from all this equipment in one place.
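In OPC UA, each data point exposed by a server is addressed by a NodeId, which has a standard string notation such as `ns=2;s=Line1.Temperature`: a namespace index plus an identifier that is numeric (`i`), string (`s`), GUID (`g`), or opaque (`b`). A minimal standard-library parser makes that structure concrete; the tag name `Line1.Temperature` is hypothetical, and in a real project an OPC UA client library would handle this for us.

```python
import base64
import uuid
from dataclasses import dataclass
from typing import Union


@dataclass(frozen=True)
class NodeId:
    namespace: int
    id_type: str  # "i" numeric, "s" string, "g" GUID, "b" opaque (ByteString)
    identifier: Union[int, str, uuid.UUID, bytes]


def parse_node_id(text: str) -> NodeId:
    """Parse the OPC UA string notation, e.g. 'ns=2;s=Line1.Temperature' or 'i=85'."""
    namespace = 0  # when "ns=" is omitted, namespace 0 (the standard namespace) is meant
    rest = text
    if rest.startswith("ns="):
        ns_part, sep, rest = rest.partition(";")
        if not sep:
            raise ValueError(f"missing identifier in {text!r}")
        namespace = int(ns_part[3:])
    id_type, sep, value = rest.partition("=")  # split at the first "=" only
    if not sep or id_type not in ("i", "s", "g", "b"):
        raise ValueError(f"unsupported NodeId {text!r}")
    if id_type == "i":
        identifier = int(value)
    elif id_type == "g":
        identifier = uuid.UUID(value)
    elif id_type == "b":
        identifier = base64.b64decode(value)
    else:
        identifier = value  # string identifiers are kept verbatim
    return NodeId(namespace, id_type, identifier)
```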

Cloud

As mentioned above, the Edge Device collects data from the offline (on-premises) environment. How do we connect devices to the cloud easily and securely? For the purposes of this article, I will describe the Amazon Web Services (AWS) integration, but every large cloud vendor, such as Microsoft Azure or Google Cloud, offers similar services of its own.

Sensors that provide data in machine-to-machine communication can be treated as Internet of Things variables, only on a larger scale. It’s like having several temperature sensors connected to one central unit at home; the difference is that here, instead of ten sensors, we have thousands.

AWS IoT Core lets us connect billions of IoT devices and route trillions of messages to AWS services without provisioning or managing servers, so we can manage and scale our devices easily and reliably. Whichever communication protocol we choose (for example MQTT, HTTPS, or LoRaWAN), device connections are secured with mutual authentication and end-to-end encryption.
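Mutual authentication means both sides present credentials: the device verifies the broker’s certificate, and the broker verifies the device’s X.509 certificate. A minimal sketch of the client side with Python’s `ssl` module, assuming the certificate files obtained when the device was registered; the endpoint name is a hypothetical placeholder (the real one is account-specific), and 8883 is the standard port for MQTT over TLS.

```python
import ssl
from typing import Optional

# Hypothetical placeholder: the real endpoint is account-specific.
AWS_IOT_ENDPOINT = "example-ats.iot.eu-central-1.amazonaws.com"
MQTT_TLS_PORT = 8883  # standard port for MQTT over TLS


def make_mutual_tls_context(ca_file: Optional[str] = None,
                            cert_file: Optional[str] = None,
                            key_file: Optional[str] = None) -> ssl.SSLContext:
    """TLS context for mutual authentication: we verify the broker, the broker verifies us."""
    # SERVER_AUTH purpose: require a valid server certificate and check the hostname.
    context = ssl.create_default_context(ssl.Purpose.SERVER_AUTH, cafile=ca_file)
    context.minimum_version = ssl.TLSVersion.TLSv1_2  # refuse legacy protocol versions
    if cert_file is not None:
        # The device's X.509 certificate and private key prove its identity to the broker.
        context.load_cert_chain(certfile=cert_file, keyfile=key_file)
    return context
```

With real files, the context can wrap a TCP socket, for example `context.wrap_socket(socket.create_connection((AWS_IOT_ENDPOINT, MQTT_TLS_PORT)), server_hostname=AWS_IOT_ENDPOINT)`, or be handed to an MQTT client library.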

The most common choice is MQTT, a standard messaging protocol for IoT designed as an extremely lightweight publish/subscribe messaging transport. From AWS IoT Core, the data can easily be stored in Amazon Simple Storage Service (Amazon S3) and queried with Amazon Athena, an interactive query service that makes it easy to analyze data using standard SQL.
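To see why MQTT counts as lightweight, it helps to look at the bytes on the wire. The sketch below hand-encodes an MQTT 3.1.1 PUBLISH packet with QoS 0: one byte for the packet type, a variable-length “remaining length”, a two-byte topic length, the topic, and the payload. It is for illustration only (a real device would use an MQTT client library), and the topic name in the usage note is hypothetical.

```python
def encode_remaining_length(length: int) -> bytes:
    """MQTT variable-length integer: 7 bits per byte, high bit set while more bytes follow."""
    if not 0 <= length <= 268_435_455:  # maximum representable in four bytes
        raise ValueError("remaining length out of range")
    out = bytearray()
    while True:
        byte = length % 128
        length //= 128
        if length > 0:
            byte |= 0x80  # continuation bit
        out.append(byte)
        if length == 0:
            return bytes(out)


def encode_publish_qos0(topic: str, payload: bytes) -> bytes:
    """Build an MQTT 3.1.1 PUBLISH packet with QoS 0 (no packet identifier needed)."""
    topic_bytes = topic.encode("utf-8")
    variable_header = len(topic_bytes).to_bytes(2, "big") + topic_bytes
    remaining = variable_header + payload
    # 0x30 = packet type PUBLISH (3) in the high nibble, with DUP=0, QoS=0, RETAIN=0.
    fixed_header = bytes([0x30]) + encode_remaining_length(len(remaining))
    return fixed_header + remaining
```

For `encode_publish_qos0("factory/line1/temperature", b'{"value": 21.5}')`, everything apart from the topic and the payload adds up to just four bytes.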

Before we send the collected data to the cloud, we need to format it, because raw values alone are not enough. It is good practice to validate the input data and perform some calculations, such as mapping a value between different numeric ranges. For example, a sensor reading in the range 0 to 1023 should be mapped to a voltage range of 0 to 5 V. The Edge Device works with Node-RED, a browser-based tool for integrating IoT devices with applications. Its principle of operation is simple: flows are described in JSON, so we can do whatever we like by visually wiring together blocks (nodes) that perform specific functions.
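The mapping from the example is plain linear interpolation, `out = out_min + (value - in_min) * (out_max - out_min) / (in_max - in_min)`. Node-RED’s built-in range node can do this without code; the Python sketch below only spells out the arithmetic together with the validation step, and wraps the result in an object with `topic` and `payload` fields, mirroring the shape of a Node-RED message. The topic name is hypothetical.

```python
def map_range(value: float, in_min: float, in_max: float,
              out_min: float, out_max: float) -> float:
    """Linearly map value from [in_min, in_max] to [out_min, out_max], rejecting invalid input."""
    if in_max == in_min:
        raise ValueError("input range must not be empty")
    if not min(in_min, in_max) <= value <= max(in_min, in_max):
        raise ValueError(f"reading {value} outside {in_min}..{in_max}")
    return out_min + (value - in_min) * (out_max - out_min) / (in_max - in_min)


def adc_to_message(raw: int, topic: str = "line1/sensor1/voltage") -> dict:
    """Scale a 10-bit reading (0..1023) to volts (0..5) in a Node-RED-like message."""
    volts = map_range(raw, 0, 1023, 0.0, 5.0)
    return {"topic": topic, "payload": {"raw": raw, "volts": round(volts, 3)}}
```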

Advantages of sending data to the cloud

How do we achieve the goal of the project and create a platform that communicates with and manages devices? The collected variable values are sent to the cloud, and from there the data can be retrieved by many applications. For example, we can use stream analytics to monitor factory operation, or even run advanced analytics to identify issues before they occur, which is called predictive maintenance.
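Real predictive maintenance relies on models built from equipment history, but the basic idea of stream analytics, judging each new reading against recent behaviour, can be shown with a rolling z-score. This is a deliberately simple stand-in, not a method the project is known to use; the window size, threshold, and minimum sample count are arbitrary assumptions.

```python
import statistics
from collections import deque


class RollingZScoreDetector:
    """Flag readings that deviate strongly from the recent window of readings."""

    def __init__(self, window: int = 20, threshold: float = 3.0, min_samples: int = 5):
        self.recent = deque(maxlen=window)  # only the last `window` readings are kept
        self.threshold = threshold
        self.min_samples = min_samples

    def update(self, value: float) -> bool:
        """Return True if `value` is anomalous compared with the readings seen so far."""
        anomalous = False
        if len(self.recent) >= self.min_samples:
            samples = list(self.recent)
            mean = statistics.fmean(samples)
            spread = statistics.pstdev(samples)
            if spread == 0:
                anomalous = value != mean  # flat history: any change stands out
            else:
                anomalous = abs(value - mean) / spread > self.threshold
        self.recent.append(value)
        return anomalous
```

Anomalous readings are still added to the window here; a production system might exclude them so that one fault does not mask the next.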

Reports and real-time device control

Apart from the obvious archiving of sensor readings, we can easily prepare daily, weekly, or monthly reports. This allows us to track factory performance and generate energy reports, which is crucial if our intention is to optimize maintenance costs and maximize revenues.
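A periodic report boils down to grouping timestamped readings by period and aggregating them. A minimal sketch, assuming the input is a list of `(ISO timestamp, kWh)` pairs (a hypothetical format) and using ISO calendar weeks for weekly reports:

```python
from collections import defaultdict
from datetime import datetime


def energy_report(readings, period: str = "day") -> dict:
    """Sum energy (kWh) per day, ISO week or month from (ISO timestamp, kWh) pairs."""
    totals = defaultdict(float)
    for timestamp, kwh in readings:
        d = datetime.fromisoformat(timestamp).date()
        if period == "day":
            key = d.isoformat()
        elif period == "week":
            year, week, _ = d.isocalendar()
            key = f"{year}-W{week:02d}"
        elif period == "month":
            key = f"{d.year}-{d.month:02d}"
        else:
            raise ValueError(f"unknown period {period!r}")
        totals[key] += kwh
    return dict(sorted(totals.items()))  # chronological order
```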

In addition, we can perform calculations and control devices in real time. Yes! Communication flows both ways. Using algorithms designed for this purpose, we can adjust equipment settings to make production as efficient as possible.
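The article does not say which algorithms adjust the equipment; as a hedged illustration, the simplest feedback rule is a proportional correction: move the setpoint by a gain times the error between target and measurement, then clamp it to safe limits before writing it back. The function names, the command format, and the node name in the test are hypothetical.

```python
def next_setpoint(current: float, measured: float, target: float,
                  gain: float, lower: float, upper: float) -> float:
    """One proportional (P) correction step, clamped to the equipment's safe limits."""
    error = target - measured
    proposed = current + gain * error
    # Never send a value outside what the machine is allowed to run at.
    return max(lower, min(upper, proposed))


def build_write_command(node_id: str, value: float) -> dict:
    """Hypothetical command message passed back down to the Edge Device."""
    return {"action": "write", "node": node_id, "value": value}
```

For example, a heater power setpoint of 100 (arbitrary units), a measured temperature of 80 °C, a target of 90 °C, and a gain of 0.5 give a new setpoint of 105.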

Scalability

Scalability is one of the driving reasons to migrate to the cloud, especially when there is more than one edge device. It is important to have the right technologies in place to allow scaling, and infrastructure as code and APIs can help reach that goal efficiently.
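Infrastructure as code means describing resources in files that tools can apply repeatedly. As a hedged illustration for the AWS setup described in this article, the sketch below generates an AWS CloudFormation template with one `AWS::IoT::Thing` per edge device, so adding a site becomes a one-line change; the device names are hypothetical, and a real setup would also declare certificates and policies.

```python
import json
import re


def iot_things_template(device_names) -> dict:
    """Build a CloudFormation template declaring one AWS::IoT::Thing per edge device."""
    resources = {}
    for name in device_names:
        # CloudFormation logical IDs must be alphanumeric, so strip everything else.
        logical_id = "Thing" + re.sub(r"[^A-Za-z0-9]", "", name.title())
        if logical_id in resources:
            raise ValueError(f"device names collide on logical ID {logical_id}")
        resources[logical_id] = {
            "Type": "AWS::IoT::Thing",
            "Properties": {"ThingName": name},
        }
    return {"AWSTemplateFormatVersion": "2010-09-09", "Resources": resources}


if __name__ == "__main__":
    print(json.dumps(iot_things_template(["edge-plant-a-01", "edge-plant-b-01"]), indent=2))
```

The printed JSON could then be saved to a file and deployed with `aws cloudformation deploy --template-file things.json --stack-name edge-devices`.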

Instead of spending hours or days setting up physical hardware, teams can focus on other tasks, because an IT administrator can integrate available devices without delay, often with just a few clicks. Later on, before we start implementing new installations, we should plan for easy access to these devices: stable remote maintenance, management, and configuration automation are important for efficient development. This matters all the more because Linux-based systems run efficiently on low-power computers, which makes many portable solutions possible.

Integrated vision systems

A great example is the smart camera, a programmable imaging platform that integrates an image sensor, a high-speed CPU, an FPGA image pre-processing unit, mass storage, and an Ethernet interface. With an industrial machine vision system that covers image acquisition, processing, and system communication, we can create a robot that drives around a field and takes pictures of plants. Combining each picture with its location and with soil and meteorological data for that exact spot gives us everything we need to analyze our crops.
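Matching each photo with the data “in that exact place” is essentially a nearest-neighbour lookup on coordinates. A minimal sketch, assuming photos and soil/weather stations both carry latitude and longitude; the field names and values are hypothetical, and the haversine formula treats the Earth as a sphere, which is accurate enough at field scale.

```python
import math


def haversine_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance between two points in kilometres (spherical Earth)."""
    r = 6371.0  # mean Earth radius in km
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = math.radians(lat2 - lat1)
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * r * math.asin(min(1.0, math.sqrt(a)))  # min() guards against rounding above 1


def enrich_photo(photo: dict, stations: list) -> dict:
    """Attach the soil/weather record of the nearest station to a geotagged photo."""
    nearest = min(stations, key=lambda s: haversine_km(photo["lat"], photo["lon"], s["lat"], s["lon"]))
    return {
        **photo,
        "station": nearest["id"],
        "soil_moisture": nearest["soil_moisture"],
        "air_temp_c": nearest["air_temp_c"],
    }
```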

The same applies to video streams. It has never been easier to make images from cameras mounted on production lines available via the Real Time Streaming Protocol (RTSP). RTSP is a network control protocol designed for use in communications systems to control streaming media servers. It is used to establish and control media sessions between endpoints, so that media can be captured, processed, and stored for playback, analytics, and machine learning.
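RTSP is a text-based protocol similar in shape to HTTP: a request line, headers including the mandatory `CSeq` sequence number, and a blank line. A client typically starts by sending `DESCRIBE` to obtain the stream description. A sketch of formatting such a request and reading the status line of the reply, following RFC 2326 (RTSP 1.0); the camera URL uses a documentation IP address and is hypothetical.

```python
def build_rtsp_request(method: str, url: str, cseq: int, extra_headers=None) -> bytes:
    """Format an RTSP/1.0 request: request line, CSeq, optional headers, blank line."""
    lines = [f"{method} {url} RTSP/1.0", f"CSeq: {cseq}"]
    for name, value in (extra_headers or {}).items():
        lines.append(f"{name}: {value}")
    return ("\r\n".join(lines) + "\r\n\r\n").encode("ascii")


def parse_status_line(response: str):
    """Extract the status code and reason phrase from an RTSP response."""
    first_line = response.split("\r\n", 1)[0]
    version, code, reason = first_line.split(" ", 2)
    if version != "RTSP/1.0":
        raise ValueError(f"unexpected protocol version {version!r}")
    return int(code), reason
```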

A Docker container can send the stream to Amazon Kinesis Video Streams, which lets us create very precise vision systems for quality control. Containerized software runs the same way regardless of infrastructure, and containers can run in parallel to support latency-critical applications right at the factory.

Conclusion

To sum up, the key is to bring all these technologies together so that automation runs at a level even higher than the production lines themselves. This is what allows us to use complex algorithms, machine learning, artificial intelligence, and much more to make production cheaper, more efficient, and even more energy-efficient and environmentally friendly.

I hope the topic of Industry 4.0 has caught your interest and that you want to find out more about the solutions implemented in modern factories.

***

If you want to find out what Jacek thinks about his tasks and what everyday work at the Embedded Competence Center looks like, watch the video:
