The days when artificial intelligence was used solely for code auto-completion are behind us. In 2026, AI agents like OpenAI’s GPT-5.4 and Anthropic’s Claude Opus 4.7 act as fully-fledged programming assistants. They can independently analyze bugs, refactor entire systems, and ensure application security.

How do you choose the right model for a specific task? We will look at their capabilities, costs, and ecosystems to help you make that decision.

The most popular AI models for developers in 2026

Today’s programming tools ecosystem is dominated by two models that best illustrate the shift in software development:

- GPT-5.4

- Claude Opus 4.7

At first glance, their capabilities seem similar. Both models can efficiently generate code, analyze complex problems, perform refactoring, and support debugging.

GPT-5.4 is the latest offering from OpenAI. This model combines Codex’s proven capabilities with advanced reasoning, native computer use, and a massive context window (source). It represents an evolution of the earlier GPT-5.3 Codex, which still works well as a cheaper alternative for purely terminal-based tasks.

Claude Opus 4.7 was introduced by Anthropic as the most capable publicly available model for advanced programming and analytical reasoning (source).

So where does the real difference lie? It lies in effectiveness for specific types of tasks, the tool ecosystem, and the quality-to-cost ratio.

Currently, both models are widely available via API and integrated with the most popular tools, such as Cursor, Windsurf, and GitHub Copilot. As of February 2026, both OpenAI and Anthropic solutions operate as agents in GitHub Copilot (source).

The market division, therefore, is not about where a given model is available, but rather in which tool ecosystem it operates most effectively:

- OpenAI offers deep integration with GitHub Copilot (both in the IDE and during PR review), has its own Codex IDE, extensions for popular editors (VS Code, Cursor, Windsurf), and ready-made libraries for CI/CD. This ecosystem is deeply embedded in the daily developer pipeline.

- Anthropic offers Claude Code (a CLI agent for terminal work), and its models are a frequent choice among Cursor users. Its approach is decidedly more agent-centric and API-first.

Choosing a model often comes down to deciding which ecosystem you want to work in.

How have these models changed?

| Model | Previously | Currently |
| --- | --- | --- |
| OpenAI (5.3 Codex → 5.4) | Generated code based on a prompt, worked on single files. | GPT-5.4 combines coding with native computer use, reasoning, and a massive context (1.05M tokens). |
| Opus (4.6 → 4.7) | Generated answers well but had limitations with more complex analyses. | Conducts multi-step reasoning, maintains the context of large systems, and generates and refactors code at a high level. Version 4.7 brought a jump on SWE-bench Pro from 53.4% to 64.3%. |

In short, both models have advanced from the role of mere “snippet generators” to the level of fully-fledged programming agents. Today, they differ in how they approach solving a problem, not whether they can solve it at all.

How does Opus 4.6 differ from 4.7?

Released in mid-April, Opus 4.7 introduces several changes that, in practice, translate into better results in selected scenarios:

| Area | Opus 4.6 | Opus 4.7 | Change |
| --- | --- | --- | --- |
| SWE-bench Pro (coding) | 53.4% | 64.3% | +10.9 pp (about +20% relative) |
| CursorBench | 58% | 70% | +12 pp (about +21% relative) |
| OfficeQA Pro (document reasoning) | baseline | −21% errors | significant improvement |
| Vision (max resolution) | 1.15 MP | 3.75 MP | about 3.3× |
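Benchmark deltas like these can be read either as percentage points or as a relative change, and the two are easy to confuse. A minimal sketch of the arithmetic, using only the scores quoted in the table (the helper name `change` is ours, not part of any benchmark tooling):

```python
def change(before: float, after: float) -> tuple[float, float]:
    """Return (percentage-point change, relative change in %) between two scores."""
    pp = after - before
    relative = (after - before) / before * 100
    return round(pp, 1), round(relative, 1)


# Scores as quoted in the table above.
swe_bench_pro = change(53.4, 64.3)   # (points, relative %)
cursor_bench = change(58.0, 70.0)

print("SWE-bench Pro:", swe_bench_pro)
print("CursorBench:", cursor_bench)
```

Stating which of the two is meant avoids comparing a relative gain on one benchmark with a point gain on another.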

Among the most important new features in version 4.7 are:

- xhigh effort level – a new parameter created specifically for tasks requiring the deepest reasoning.

- Task budgets (beta) – a feature allowing the model to manage its token budget in agentic loops.

- New tokenizer – note that it increases token usage by about 35%, which raises real operating costs even though the list pricing is unchanged ($5/M input, $25/M output).
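To see what the tokenizer change means for a budget, here is a rough sketch of the cost arithmetic. The prices and the ~35% figure come from the text above; the request size is a hypothetical example, and applying the same inflation to input and output tokens is a simplifying assumption.

```python
INPUT_PRICE_PER_M = 5.00     # USD per 1M input tokens (quoted above)
OUTPUT_PRICE_PER_M = 25.00   # USD per 1M output tokens (quoted above)
TOKENIZER_INFLATION = 1.35   # ~35% more tokens for the same text (quoted above)


def request_cost(input_tokens: int, output_tokens: int, inflation: float = 1.0) -> float:
    """Cost in USD of one request; `inflation` scales both token counts (assumption)."""
    cost = (
        input_tokens * inflation * INPUT_PRICE_PER_M
        + output_tokens * inflation * OUTPUT_PRICE_PER_M
    ) / 1_000_000
    return round(cost, 4)


# Hypothetical request: 200k input tokens and 8k output tokens under the old tokenizer.
old_cost = request_cost(200_000, 8_000)
new_cost = request_cost(200_000, 8_000, TOKENIZER_INFLATION)

print(f"old tokenizer: ${old_cost:.2f}, new tokenizer: ${new_cost:.2f}")
```

With unchanged prices, the cost per request grows in proportion to the token count, so it is worth measuring the actual inflation on your own prompts rather than relying on the average.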

Important practical caveat: Opus 4.7 follows instructions much more strictly. This means that prompts that worked perfectly in version 4.6 may require minor adjustments. It is worth testing them before a full migration.

How was GPT-5.4 created?

It’s worth clarifying one thing: GPT-5.4 is not simply a new version of the Codex model. It is a general-purpose model that absorbed the programming skills of its predecessors and then extended them with native computer use, advanced research, and a large context window.

To better understand this, let’s look at the evolution of OpenAI tools:

- GPT-5.2-Codex (late 2025) – the first standalone agent in the company’s lineup.

- GPT-5.3-Codex (February 2026) – an update: 25% faster, excellent in the terminal (77.3% on Terminal-Bench), and the first model to receive the highest rating in cybersecurity (source).

- GPT-5.4 (March 2026) – OpenAI combined Codex’s programming precision with general reasoning and a context window accommodating up to 1.05M tokens (source).

Importantly, the older GPT-5.3 Codex has not disappeared from the market. It remains available as the most affordable solution for purely terminal-based tasks and CLI work. If your daily workflow relies heavily on the console, scripts, and automation, Codex 5.3 will still hit the bullseye.

Work characteristics: GPT-5.4 and Claude Opus 4.7

Although both models can easily handle most daily programming tasks, their approaches to work differ. And this is what determines their effectiveness in specific scenarios.

| Area | GPT-5.4 | Opus 4.7 |
| --- | --- | --- |
| Default style | Quickly moves to implementation | Analyzes first, then acts |
| Strength | Speed, computer use, and broad capabilities | Depth of reasoning, maintaining context |
| SWE-bench Pro | 57.7% (per OpenAI) | 64.3% (per Anthropic) |
| Context | 1.05M tokens | 1M tokens |
| Computer use | Native (OSWorld 75%) | Supported (Computer Use API, vision 3.75 MP) |
| Ecosystem (deepest integration) | GitHub Copilot (PR review, Copilot in IDE), Codex IDE, CI/CD extensions | Cursor, Claude Code (CLI), API-first |
| Security | Codex Security – proactive vulnerability scanning in the pipeline | Consciously narrowed capabilities in cybersecurity (per Anthropic, relative to Mythos) |
| Approach to risk | Broad capabilities + control layers | Limiting capabilities at the source |

The last row of the table clearly illustrates the fundamental difference in the two providers’ philosophies.

OpenAI provides a model with incredibly broad capabilities but imposes external control layers on it (such as Codex Security or Trusted Access for Cyber). Anthropic chooses a different path: some potentially dangerous capabilities are narrowed down even before the model is publicly released (according to the company’s declaration, Opus 4.7’s cybersecurity capabilities were consciously limited compared to the powerful Mythos model). Both approaches make sense and affect how you ultimately design your workflow.

Simply put, the difference in their work style looks like this:

- GPT-5.4: Receives a task → immediately generates a solution → iterates if tests fail.

- Opus: Receives a task → analyzes the context → plans the approach → implements the code along with a justification.

Neither of these styles is objectively better. It all depends on the problem you are facing.

Where do these differences come from?

The different work style is not a coincidence – it stems from design decisions at the architecture level of the models themselves:

- Explicit vs. Implicit Planning. Opus tends to explicitly break down a problem into smaller steps before generating the first line of code. GPT-5.4 plans more “on the fly” – it starts writing and corrects course as it goes. As a result, Opus rarely hits dead ends on complex tasks, but it can be slower on the simplest ones. This is a classic dilemma known from distributed systems: an execution-first (optimistic) approach versus a plan-first (deliberative) approach.

- Context Management. Both models have a large context window (around 1M tokens) and generate up to 128k output tokens. The difference lies in how they use it. Opus excels at maintaining consistency during long, multi-step interactions. GPT-5.4, on the other hand, is strongest when a task requires a wide range of capabilities (e.g., simultaneous coding, browser use, and research) within a single session.

- Strategy Towards Uncertainty. When data is missing, GPT-5.4 tends to generate the most probable solution. Opus will more often stop and explicitly ask for the missing information. Depending on the situation, each of these traits can be a huge advantage or an irritating flaw.

These differences show up in the published numbers. Opus 4.7’s score on SWE-bench Pro (64.3% per Anthropic vs 57.7% for GPT-5.4 per OpenAI) is consistent with the idea that deeper planning translates into higher code quality in complex projects, although both figures are reported by the vendors themselves.

Practical applications of models in IT projects

Let’s step down from the level of abstraction and look at specific scenarios where the choice of model makes a real difference.

When will GPT-5.4 work best?

- Mass refactoring: Changing a pattern across 200 files or migrating a large API. GPT-5.4 offers significantly higher throughput and a lower price per token, which is crucial for large-scale repetitive changes.

- Generating boilerplate: Writing repetitive tests, CRUD operations, or configuration files. Speed is what counts here.

- Tasks requiring intensive computer use: Interacting with user interfaces, testing web applications, or working with desktop tools. GPT-5.4 features native computer use integrated directly into the model (OSWorld 75%, source). Opus supports this via API, but with slightly less maturity.

- Tight integration with GitHub: Automated PR review, suggestions in Copilot, CI/CD support. OpenAI models are available by default in GitHub Copilot for business users (source).

- Security scanning: Introduced in March 2026, Codex Security is not just a feature, but a separate layer in the pipeline. It analyzes code before merging, acting like an intelligent SAST/DAST. It understands the context of changes and reduces false alarms by about 50% (source).

When should you reach for Claude Opus 4.7?

- Debugging complex problems: Race conditions, memory leaks, intermittent failures. Opus focuses on deeper analysis and maintains context exceptionally well, which is reflected in the SWE-bench Pro results.

- Architectural decisions: Evaluating trade-offs, choosing the right design pattern, or analyzing the impact of a single change on the entire system. Here, deeper reasoning definitely pays off.

- Working with a large, interconnected context: Analyzing many interdependent files and understanding the data flow across multiple application layers.

- Visual analysis: Opus 4.7 can analyze images with resolutions up to 3.75 MP. This is invaluable help when debugging UI, analyzing complex architectural diagrams, or reviewing error screenshots.

When more does not mean better

It’s worth remembering that deeper reasoning does not always mean a better outcome for the user. For very simple tasks, Opus can be slower and more expensive – not because it can’t handle it, but because it analyzes more than necessary. Instead of simply writing a basic endpoint, it starts considering edge cases that no one asked for. Additionally, the new tokenizer in version 4.7 increases the cost of such operations by ~35%.

On the other hand, for some pesky bugs, GPT-5.4’s iterative approach can be surprisingly effective. The model instantly generates several fix variants that you can quickly verify using tests.

Both models excel when it comes to:

- Daily coding (new features, simple fixes).

- Code review (both efficiently catch problems).

- Explaining and documenting existing code.

The new developer workflow

What does working with these models look like in practice? Let’s imagine a classic problem: sudden timeouts on the /api/orders endpoint.

In the past (without AI):

- The developer analyzes logs.

- Looks for the cause in the code.

- Implements a fix.

- Writes tests and deploys the change.

Today (with AI) – leveraging each model’s strengths:

- Specify: You write down a short specification of the problem (goal, acceptance criteria, constraints) – in a SPEC.md, a Jira issue, or a task description.

- You provide Opus with logs from the last 24 hours along with the specification → Opus identifies that the problem only occurs for orders with more than 50 items. It points to a lack of pagination and an N+1 query issue.

- You pass this analysis to GPT-5.4 → the model refactors the repository, adds pagination, eliminates the N+1 issue, and generates an integration test.

- Codex Security scans the fix for vulnerabilities (e.g., IDOR – Insecure Direct Object Reference, SQL injection) → confirms the security of this change.

- You perform the final review → check if the solution breaks the API contract, run tests, and deploy.

What actually changed in the code

Based on Opus’s analysis, GPT-5.4 generated a fix. The diff itself is short but requires understanding JPA, maintaining the API contract, and writing a regression test – which is exactly what we expect from an AI assistant.

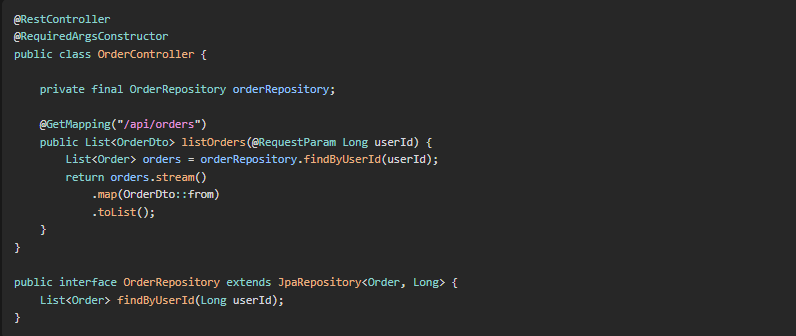

Before:

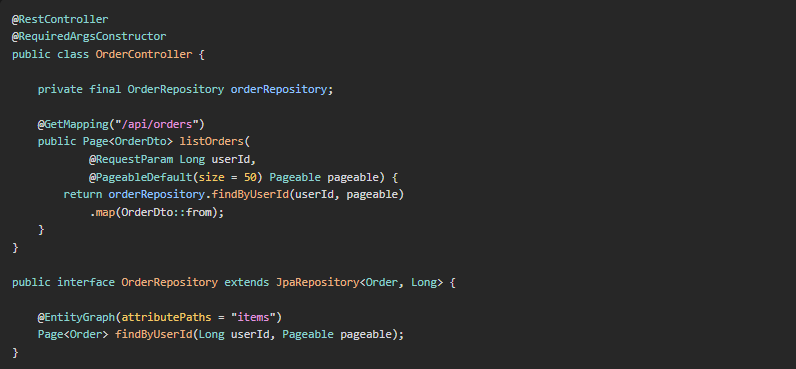

After:

Two key changes were introduced: the @EntityGraph annotation eliminates the N+1 problem (a single query with JOIN FETCH), and the Pageable object introduces pagination, limiting the payload. It’s worth remembering that in a real project, there is also a rollback plan, post-deployment monitoring, and team communication – AI does not replace these layers, but merely shortens the path between diagnosis and the fix code.
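The fix in the article is written in Java with Spring Data JPA. As a language-neutral illustration of the same two ideas (one joined query instead of N+1, plus pagination), here is a minimal sketch using Python’s built-in sqlite3 module. The table names, the data, and the choice to paginate orders rather than items are assumptions made for the example, not details from the original diff.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE orders (id INTEGER PRIMARY KEY, customer TEXT);
    CREATE TABLE order_items (id INTEGER PRIMARY KEY, order_id INTEGER, sku TEXT);
""")
# Hypothetical data: 120 orders with 3 items each.
conn.executemany("INSERT INTO orders (id, customer) VALUES (?, ?)",
                 [(i, f"customer-{i}") for i in range(1, 121)])
conn.executemany("INSERT INTO order_items (order_id, sku) VALUES (?, ?)",
                 [(o, f"sku-{o}-{n}") for o in range(1, 121) for n in range(3)])

query_count = 0


def run(sql: str, params: tuple = ()) -> list[tuple]:
    """Execute a SELECT and count it, so both strategies can be compared."""
    global query_count
    query_count += 1
    return conn.execute(sql, params).fetchall()


def load_n_plus_one() -> dict[int, list[str]]:
    """One query for all orders, then one extra query per order (N+1)."""
    result = {}
    for (order_id,) in run("SELECT id FROM orders ORDER BY id"):
        rows = run("SELECT sku FROM order_items WHERE order_id = ?", (order_id,))
        result[order_id] = [sku for (sku,) in rows]
    return result


def load_page_joined(page: int, size: int = 50) -> dict[int, list[str]]:
    """One query: page the orders in a subquery, then join their items.

    The inner join skips orders without items; a LEFT JOIN would keep them.
    """
    rows = run(
        """
        SELECT o.id, i.sku
        FROM (SELECT id FROM orders ORDER BY id LIMIT ? OFFSET ?) AS o
        JOIN order_items AS i ON i.order_id = o.id
        ORDER BY o.id, i.id
        """,
        (size, page * size),
    )
    result: dict[int, list[str]] = {}
    for order_id, sku in rows:
        result.setdefault(order_id, []).append(sku)
    return result


query_count = 0
everything = load_n_plus_one()
n_plus_one_queries = query_count

query_count = 0
first_page = load_page_joined(page=0)
joined_queries = query_count

print(n_plus_one_queries, "queries without the fix;", joined_queries, "with it")
```

Note that the page limit is applied to orders before the join. Applying LIMIT to the joined rows instead would cut an order’s items in half at page boundaries, which is a common pitfall when combining eager loading with pagination.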

Prompts used in this scenario

Here is what you actually type into the model at each stage of working with AI.

Step 1 – Specify (requirements specification)

Before you even engage the model, you write down the requirements, acceptance criteria, and constraints as a separate artifact – a SPEC.md file in the repository, a Jira issue, or a short block in the task description. This is the contract between you and the AI.

- Goal: The /api/orders endpoint should respond in < 200 ms for orders with ≤ 100 items.

- Requirements: Maintain backward compatibility of the API contract, write an integration test for pagination > 50 items.

- Constraints: We do not change the database schema in this iteration, and we do not introduce a cache.

This is the core of the spec-driven development approach. You provide the specification to the model in Step 2 along with the logs – the plan is then formed not based on “what the model thinks you want”, but “what you explicitly wrote down”. As a result, the model hallucinates intent much less frequently.
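One way to keep such a specification consistent between tasks is to hold it as structured data and render SPEC.md from it. A minimal sketch, assuming a three-section layout (goal, requirements, constraints); the `Spec` class and its Markdown layout are illustrative and not a format required by any tool:

```python
from dataclasses import dataclass, field


@dataclass
class Spec:
    """Hypothetical container for the three parts of the specification."""
    goal: str
    requirements: list[str] = field(default_factory=list)
    constraints: list[str] = field(default_factory=list)

    def to_markdown(self) -> str:
        """Render the spec as a small Markdown document."""
        lines = ["# SPEC", "", "## Goal", self.goal, "", "## Requirements"]
        lines += [f"- {item}" for item in self.requirements]
        lines += ["", "## Constraints"]
        lines += [f"- {item}" for item in self.constraints]
        return "\n".join(lines) + "\n"


spec = Spec(
    goal="The /api/orders endpoint responds in < 200 ms for orders with ≤ 100 items.",
    requirements=[
        "Maintain backward compatibility of the API contract.",
        "Write an integration test for pagination > 50 items.",
    ],
    constraints=[
        "Do not change the database schema in this iteration.",
        "Do not introduce a cache.",
    ],
)

spec_md = spec.to_markdown()
print(spec_md)
```

The rendered text can be committed as SPEC.md or pasted at the top of the analysis prompt, so every model in the chain works from the same contract.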

Step 2 – Opus 4.7 in Ask/Plan mode (analysis)

I am attaching the spec (SPEC.md), the last 24h of logs from the /api/orders endpoint, and the Sentry stack trace. Identify the root causes of the timeouts and propose a repair strategy aligned with the specification.

It is crucial to run this step in read-only mode (e.g., Ask mode in Cursor or Plan mode in Claude Code). This forces the model to focus solely on diagnosis and solution planning, preventing premature file modifications. A plain-text request such as “do not write code yet” is sometimes ignored by models, whereas a read-only tool mode enforces it technically.

Step 3 – GPT-5.4 in Agent mode (implementation)

Implement the fix according to this strategy: [paste Opus’s output]. Add pagination (limit 50), eliminate N+1 via eager loading, and write an integration test for an order with 100 items.

At this stage, we switch to Agent mode (or its equivalent, e.g., accept edits in Claude Code). Speed of execution is what counts here: GPT-5.4 takes the finished strategy and quickly turns it into working code and tests.

Step 4 – Codex Security (verification)

Scan the diff for IDOR, SQL injection, and permissions. Pay special attention to the new parameters ?page= and ?size=.

Specifying concrete attack vectors narrows the scope of scanning and reduces the number of false alarms (false positives). Instead of a generic “check security,” we get a targeted analysis.
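The new ?page= and ?size= parameters are a good example of what such a targeted scan should look at: unbounded or non-numeric values. A minimal sketch of the kind of validation a reviewer would expect to find, written in Python for illustration; the function name and the upper bound of 50 (taken from the “limit 50” in the implementation prompt) are assumptions, not code from the article’s fix.

```python
MAX_PAGE_SIZE = 50  # assumed to match the "limit 50" in the implementation prompt


def parse_paging(params: dict[str, str]) -> tuple[int, int]:
    """Return (page, size), or raise ValueError for anything out of bounds."""
    try:
        page = int(params.get("page", "0"))
        size = int(params.get("size", str(MAX_PAGE_SIZE)))
    except ValueError as exc:
        # Non-numeric input (including injection attempts) is rejected outright.
        raise ValueError("page and size must be integers") from exc
    if page < 0:
        raise ValueError("page must be >= 0")
    if not 1 <= size <= MAX_PAGE_SIZE:
        raise ValueError(f"size must be between 1 and {MAX_PAGE_SIZE}")
    return page, size


print(parse_paging({"page": "2", "size": "10"}))
```

Rejecting oversized pages matters as much as rejecting malformed ones: an unchecked size parameter would quietly reintroduce the large payloads the fix was meant to remove.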

Preview: a full case study – with logs, model outputs, and cost measurements – will be described in the next article in the series.

Could Opus be used exclusively for both steps? Yes. Would GPT-5.4 handle the analysis on its own? Probably, yes. However, it is precisely matching the model to the specifics of the task that gives you the best balance of quality, time, and cost.

The developer ceases to be solely responsible for implementation – they become the orchestrator of AI work:

- Selects the right model for the task.

- Controls the flow of information between different agents.

- Manages risk (security, regressions, hallucinations).

- Decides when AI can act autonomously and when it requires supervision.

Implementing AI in a team

Looking from a systems architect’s perspective, it’s worth treating AI models like microservices with different characteristics:

- Opus 4.7 – lower throughput, higher cost, optimized for complex reasoning.

- Claude Sonnet – Opus’s cheaper sibling in the Anthropic ecosystem, good for execution and daily coding.

- GPT-5.4 – higher throughput, lower cost, native computer use, broad spectrum of capabilities.

- GPT-5.3 Codex – the most affordable option, specialized in terminal/CLI work.

- Codex Security – a specialized agent for security scanning.

This is a situation analogous to choosing between a synchronous API call and batch processing – both approaches work, but their optimal use depends on the context.

Choosing the model for the step

Opus 4.7 is worth its price where you are paying for more extensive reasoning. Where you only need execution, it’s worth considering a cheaper sibling within the same ecosystem or a competing model:

| Workflow Step | Recommendation | Why |
| --- | --- | --- |
| Specify | any model (or no AI) | You write the specification, not the model |
| Plan / Analyze | Opus 4.7 | deeper reasoning translates directly into plan quality |
| Implement | Claude Sonnet or GPT-5.4 | cheaper, powerful enough to execute a ready plan |
| Verify / Security | Codex Security or a specialized agent | narrow task scope, does not need a flagship model |

Why Sonnet instead of Opus for implementation?

- First, pricing – within the same generation, Sonnet is usually several times cheaper than Opus.

- Second, token usage – Opus 4.7 has a new tokenizer which (as mentioned earlier) increases the number of tokens by ~35%, and its deeper reasoning chains naturally lead to longer responses.

The result: a single implementation iteration on Opus can cost several times as much as the same iteration on Sonnet, with a marginal quality difference at the point of executing a ready plan.

In a mature work environment, AI plays three main roles:

- Decision layer – analysis, planning, risk assessment (Opus excels here).

- Execution layer – implementation, refactoring, test generation (GPT-5.4 offers higher performance and lower costs here).

- Security layer – vulnerability scanning, verification of introduced changes (Codex Security).

The architect’s task is to manage the flow between these layers. In practice, this means designing an orchestration layer. It is worth asking yourself a few key questions:

- Routing: How to automatically route requests to the appropriate model? Should the criterion be the task type, its complexity, or perhaps cost?

- Fallback: What happens when the “first choice” model returns an unsatisfactory result? Is there a mechanism for a smooth switch to an alternative?

- Decision logging: Do you record which model proposed a given solution? This is crucial for later audits and team learning.

- Quality metrics: How do you measure the effectiveness of a given model in your specific context? Do you measure time, costs, or the percentage of accepted changes?
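The four questions above can be sketched as a very small orchestration layer. This is an illustration under stated assumptions, not a reference design: the model labels are plain strings rather than real API identifiers, the routing table is hypothetical, and `call_model` stands in for whatever client your team actually uses.

```python
from dataclasses import dataclass
from typing import Callable, Optional

# Routing: hypothetical table of task type -> models in order of preference.
ROUTES = {
    "plan": ["opus-4.7", "gpt-5.4"],
    "implement": ["gpt-5.4", "claude-sonnet"],
    "security": ["codex-security"],
}


@dataclass
class Decision:
    """Decision logging: which model was tried for which task, and the outcome."""
    task_type: str
    model: str
    accepted: bool


decision_log: list[Decision] = []


def route(task_type: str, prompt: str,
          call_model: Callable[[str, str], str],
          is_acceptable: Callable[[str], bool]) -> Optional[str]:
    """Try each model for the task type in order (fallback) and log every attempt."""
    for model in ROUTES.get(task_type, []):
        output = call_model(model, prompt)
        accepted = is_acceptable(output)
        decision_log.append(Decision(task_type, model, accepted))
        if accepted:
            return output
    return None  # every candidate failed; escalate to a human


def acceptance_rate(model: str) -> float:
    """Quality metric: share of logged attempts by `model` that were accepted."""
    attempts = [d for d in decision_log if d.model == model]
    return sum(d.accepted for d in attempts) / len(attempts) if attempts else 0.0


def fake_call(model: str, prompt: str) -> str:
    # Stand-in for a real API client: the first-choice planner "fails" here.
    return "" if model == "opus-4.7" else f"{model}: plan for {prompt}"


result = route("plan", "timeouts on /api/orders", fake_call, lambda out: bool(out))
print(result)
print([(d.model, d.accepted) for d in decision_log])
```

In a real setup the acceptance check would be tests, a review gate, or a scoring model rather than a non-empty string, and the log would go to persistent storage so it can support audits.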

Even just asking these questions dramatically changes how we think about building processes with AI. The architect is no longer just designing the IT system itself, but also the collaboration system between AI models.

Claude Mythos – the future of Cybersecurity in AI

When talking about AI models in 2026, it is impossible to ignore the elephant in the room: Claude Mythos Preview.

On April 7, Anthropic announced a model that is not just “another, more powerful version.” It represents an entirely new class of systems capable of conducting long, uninterrupted reasoning chains without any user intervention. According to benchmarks published by the creators, the results are impressive:

- SWE-bench Verified: 93.9% (compared to 80.8% for Opus 4.6).

- USAMO 2026 (advanced mathematics): 97.6% (compared to 42.3% for Opus 4.6).

- Cybersecurity CTF: 83.1% (compared to 66.6% for Opus 4.6).

According to information shared under Project Glasswing, Mythos demonstrated the ability to autonomously identify previously unknown vulnerabilities (zero-days) in extremely complex systems – including a 27-year-old bug in OpenBSD. However, these results are from controlled test environments and have not yet been publicly verified by independent entities.

The most important thing, however, is that Mythos is not publicly available. For the first time since OpenAI held back GPT-2 in 2019, a major lab has drastically restricted access to a model for security reasons. Anthropic provided it to only about 50 selected organizations (including Microsoft, AWS, Apple, Google, Nvidia, and Cisco) under the Project Glasswing program.

What does this mean for Opus 4.7 users? According to Anthropic’s announcements, Opus 4.7’s cybersecurity capabilities were deliberately narrowed compared to the Mythos model. This is a clear design declaration: a publicly available model should be powerful, but above all, safe.

Mythos sets a new direction for the entire industry: models are becoming so advanced that it becomes necessary to deliberately limit their capabilities. This fundamentally changes the architect’s perspective – we no longer just ask “which model is best for my task,” but “which models do I even have access to, and under what conditions.” (source)

What is worth remembering?

The widely known warning that “AI sometimes makes mistakes” surprises no one in 2026. Let’s pay attention to more specific pitfalls:

- The speed of GPT-5.4 requires rigorous quality control. The model can instantly modify dozens of files. Therefore, automated tests that continuously verify the correctness of introduced changes are an essential element of the workflow.

- Opus can generate an incredibly convincing but incorrect analysis. Always verify its assumptions, especially when it relies on incomplete logs or outdated documentation.

- Migrating between model versions is not trivial. Prompts that worked perfectly with Opus 4.6 may stop working in version 4.7. Additionally, the new tokenizer changes the cost structure. Always test the model’s behavior before switching it to a production environment.

- Choosing a model is a purely engineering decision, not an ideological one. There is no point in arguing about which model is generally “better.” The key is which one better fits the task you currently have in front of you.

- Not every problem requires engaging AI. Sometimes you will write a simple bugfix yourself faster than it takes to formulate a precise prompt.

Summary

Although the scope of capabilities of both models is very similar, they differ in effectiveness depending on the specifics of the task:

- GPT-5.4 is a model optimized for speed and costs. It offers native computer use and a powerful 1.05M token context. It is available directly in GitHub Copilot and the entire OpenAI Codex ecosystem. It works perfectly when you know exactly what you want to do and simply need efficient execution.

- Claude Opus 4.7 is geared towards deep reasoning (achieving 64.3% in SWE-bench Pro per Anthropic) and excels at maintaining complex context. It is immensely popular among Cursor users and API-first tools. It is the optimal choice when you first need to understand a complex problem and plan the solution architecture.

- GPT-5.3 Codex remains the most affordable option, specialized in terminal and CLI work. It is a well-justified choice for automation and scripting.

And somewhere in the background looms Claude Mythos Preview, reminding us that the models we use every day are consciously limited versions of what is already technically possible.

The key to efficiency in 2026 is not searching for a single, universal model, but rather a conscious, flexible selection of the tool for a specific problem.

Want to implement AI Agents in your team?

Are you wondering how to optimize your organization’s development processes using the latest AI models? Contact the experts at Sii Poland. We will help you choose the right tools, design a secure architecture, and train your team to maximize the potential of GPT-5.4, Claude Opus, and other enterprise-class solutions.

Let’s talk about AI in your project.

***

About data and benchmarks

The article uses official materials from model producers (OpenAI, Anthropic), internal and partner benchmarks (e.g., SWE-bench, CursorBench), and data from test programs (e.g., Project Glasswing). Some results come from controlled test environments and may differ from results in production systems.
