The most important shift is no longer that AI can generate code or a draft test. Both OpenAI and Anthropic are increasingly building tools aimed at real developers and quality workflows: working with code, repositories, GUIs, terminals, execution environments, and final artifacts.

For a Test Developer, this moves the question from “Can AI write a test?” to “How do we design a quality system that people and agents can use safely and effectively?”

Why this topic matters more today than it did just a few months ago

Until recently, most discussions about AI in testing focused on accelerating familiar tasks:

- generating test cases,

- suggesting assertions,

- creating Playwright boilerplate,

- or writing helper snippets.

That still matters, but it is no longer the market’s main differentiator.

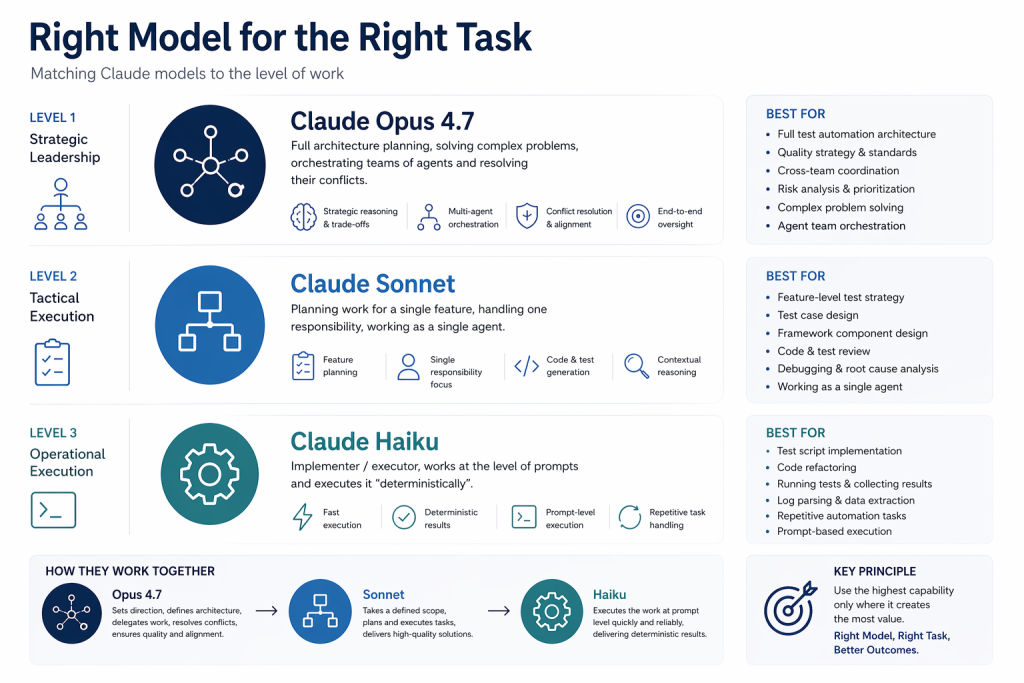

The latest releases and updates from both companies show a different level of ambition. The discussion is becoming less about “a chat that helps a little” and more about systems that can read code, execute commands, work across files, use a GUI, and support long, multi-step tasks.

From a Test Developer’s perspective, this affects not only test creation itself but also framework architecture, library maintenance, evidence gathering, governance, and how testing connects with security and risk validation.

What is changing in the work of a Test Developer

Someone responsible for a large testing framework and libraries shared across multiple applications has long been doing more than writing individual scripts. This role includes designing abstraction layers, shared standards, integrations, reporting, approaches to test data, and, increasingly, the quality of the process around the entire tooling ecosystem.

New AI tools amplify that shift. A framework now needs to be understandable not only to a developer or automation engineer, but increasingly to an agent as well. Stable contracts, consistent naming, predictable helpers, strong usage examples, and clearly described modules start to function as a “quality interface” not only for humans, but also for AI systems.

This pushes test architecture closer to platform architecture. In practice, what matters is no longer only whether a framework can test something, but also whether it can be explored, analyzed, and extended safely in semi-autonomous workflows.
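
As a minimal sketch of what such a "quality interface" might look like in practice, the helper below spells out its contract, validates its inputs, and carries a usage example in its docstring. Every name here (`UserFixture`, `ALLOWED_ROLES`, `build_user_fixture`) is hypothetical and chosen only for illustration; a real framework would have its own types and rules.

```python
from dataclasses import dataclass

# Hypothetical names throughout; none of them come from a real framework.
ALLOWED_ROLES = ("admin", "editor", "viewer")


@dataclass(frozen=True)
class UserFixture:
    """Immutable test-data record returned by the shared helper."""

    username: str
    role: str
    locale: str


def build_user_fixture(
    username: str, role: str = "viewer", locale: str = "en-US"
) -> UserFixture:
    """Build a user fixture with a validated role.

    Contract:
        - `username` must be non-empty.
        - `role` must be one of ALLOWED_ROLES.
        - The result is immutable, so tests cannot mutate shared data.

    Example:
        build_user_fixture("alice", role="admin")

    Raises:
        ValueError: if the contract above is violated.
    """
    if not username:
        raise ValueError("username must be non-empty")
    if role not in ALLOWED_ROLES:
        raise ValueError(f"unknown role {role!r}; expected one of {ALLOWED_ROLES}")
    return UserFixture(username=username, role=role, locale=locale)
```

The point of the sketch is less the helper itself than its shape: an explicit contract, a predictable failure mode, and an example that a human or an agent can read before calling it.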

From test generation to agent-based validation

The most practical change is that today’s models are increasingly moving beyond “writing” alone. They can read repositories, work across many files, execute commands, collect logs, run tests, and move through interfaces. In that sense, AI is becoming closer to an agent performing part of the validation process than an assistant suggesting a single line of code.

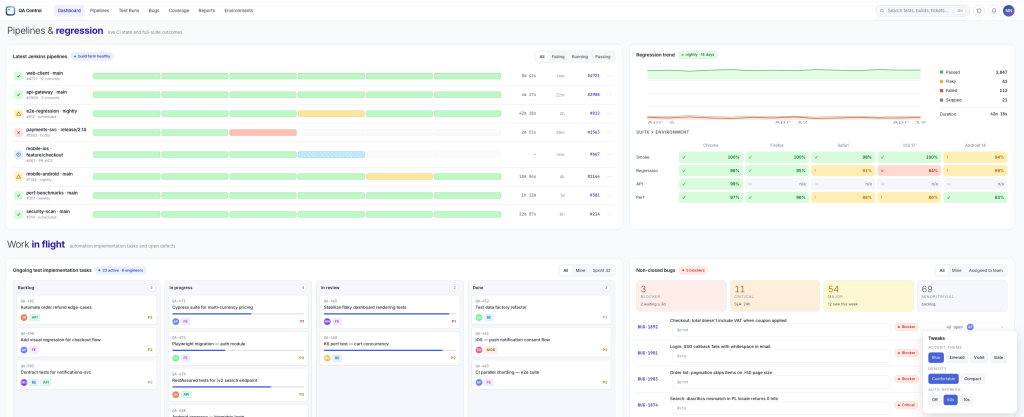

For testing, this means one workflow can combine:

- change analysis,

- coverage proposals,

- execution of selected GUI steps,

- screenshot collection,

- linking results to logs,

- and preparing a summary for the team.

That is much closer to real work in enterprise projects than the classic “write me a test in tool X.”
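
The combined workflow above can be sketched as a sequence of steps that share one run context. This is an illustrative outline only: the step functions are placeholders, and the names (`RunContext`, `run_workflow`, `summarize`) and file paths are assumptions, not part of any vendor tool.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class RunContext:
    """Shared state passed through every step of one validation run."""

    change_id: str
    notes: List[str] = field(default_factory=list)
    evidence: List[str] = field(default_factory=list)


Step = Callable[[RunContext], None]


def analyze_change(ctx: RunContext) -> None:
    # Placeholder: a real step would inspect the diff for ctx.change_id.
    ctx.notes.append(f"analyzed change {ctx.change_id}")


def propose_coverage(ctx: RunContext) -> None:
    # Placeholder: a real step would map the change to affected tests.
    ctx.notes.append("proposed coverage for affected modules")


def run_gui_steps(ctx: RunContext) -> None:
    # Placeholder: a real step would drive the GUI and save a screenshot.
    ctx.evidence.append(f"screenshots/{ctx.change_id}/step-1.png")


def link_logs(ctx: RunContext) -> None:
    # Placeholder: a real step would attach the matching log file.
    ctx.evidence.append(f"logs/{ctx.change_id}.log")


def run_workflow(change_id: str, steps: List[Step]) -> RunContext:
    """Run the steps in order against one shared context."""
    ctx = RunContext(change_id=change_id)
    for step in steps:
        step(ctx)
    return ctx


def summarize(ctx: RunContext) -> str:
    """Render a plain-text summary for the team."""
    lines = [f"Change {ctx.change_id}"]
    lines += [f"- {note}" for note in ctx.notes]
    lines += [f"- evidence: {path}" for path in ctx.evidence]
    return "\n".join(lines)
```

Keeping the steps as plain functions over a shared context means the same sequence could be run by a person, a CI job, or an agent, and the summary is always built from the same recorded notes and evidence.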

Where the impact will be visible first

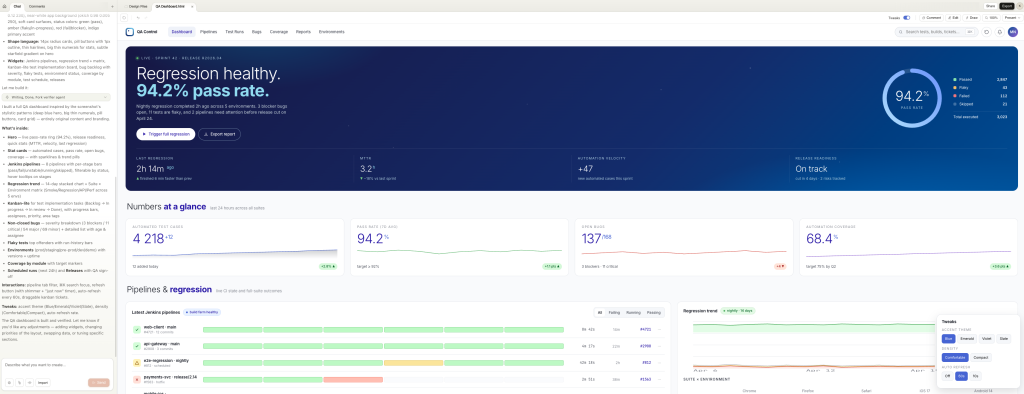

| Area | What is changing now | What it means for test architecture |
| --- | --- | --- |
| Framework maintenance | AI can analyze dependencies, helpers, library structure, and shared implementation patterns across multiple applications faster. | Clean contracts, strong examples, and easy discoverability of framework capabilities become even more important. |
| GUI exploration and bug reproduction | Computer Use can move through the interface, gather evidence, and reproduce issues beyond the API layer alone. | Teams need a conscious distinction between agent-driven exploration and stable regression coverage. |
| Triage and evidence gathering | Models can connect logs, traces, screenshots, change history, and a likely root-cause hypothesis. | Standardizing artifacts and observability becomes even more critical. |
| Testing and security | An agent can not only execute a test, but also analyze potential abuse paths and vulnerabilities. | The boundary between quality, test architecture, and security is starting to blur. |
| Communication with stakeholders | AI can prepare summaries, slide decks, one-pagers, and visual materials faster. | Quality reporting can become more consistent and less operationally expensive. |
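
Two rows of the table, standardized artifacts and the distinction between agent-driven exploration and regression coverage, can be illustrated with one small record type. This is a sketch under assumptions: the field names, the two `origin` values, and the JSON layout are hypothetical and would need to match whatever reporting format a team already uses.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List, Optional

# Hypothetical origin labels separating exploration from regression results.
ORIGINS = ("agent_exploration", "regression")


@dataclass
class EvidenceRecord:
    """One standardized piece of evidence produced by a test or agent run."""

    test_id: str
    origin: str
    status: str
    screenshots: List[str] = field(default_factory=list)
    log_refs: List[str] = field(default_factory=list)
    root_cause_hypothesis: Optional[str] = None

    def __post_init__(self) -> None:
        # Reject records that do not declare where they came from.
        if self.origin not in ORIGINS:
            raise ValueError(f"origin must be one of {ORIGINS}, got {self.origin!r}")


def to_json(record: EvidenceRecord) -> str:
    """Serialize a record with stable key order so diffs stay readable."""
    return json.dumps(asdict(record), sort_keys=True)


def regression_only(records: List[EvidenceRecord]) -> List[EvidenceRecord]:
    """Keep only results that count toward stable regression coverage."""
    return [r for r in records if r.origin == "regression"]
```

Making `origin` a required, validated field is one way to keep exploratory agent output from being silently counted as regression coverage in reports.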

Codex and OpenAI’s shift toward developer work

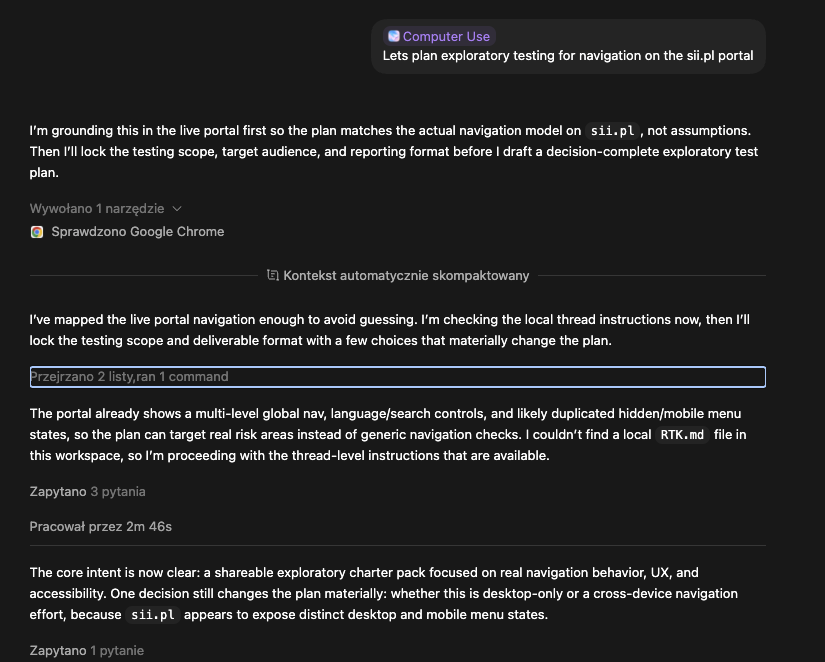

One of the most important signals of recent months is a clear shift toward tools that support everyday engineering work. This is no longer only about generating snippets of code, but about working across many files, in the terminal, in IDEs, in web environments, and on partially independent tasks. An increasingly important part of that direction is also Computer Use – the ability of AI to work not only at the code level, but also through the browser, GUI, image-based context, and visual verification of outcomes. That brings these tools much closer to real testing and quality workflows.

Another signal of that shift is the winding down of earlier initiatives that sat further away from software engineering, such as Sora (see Sources), alongside a stronger focus on agentic, coding, and environment-aware capabilities. From a testing perspective, what matters more than the product list itself is that AI is entering the real day-to-day workflow of developers and quality teams. This means not only faster code creation, but also a greater ability to move through workflows, execute tasks in an environment, iterate based on results, and gather evidence.

From a testing perspective, this is not about a simplistic claim that one vendor is starting to look like another. The real point is that both companies are increasingly meeting in the same part of the market: AI as a layer supporting real work on code, repositories, tools, environments, and interfaces – not just a separate chat window for inspiration.

The release of GPT-5.5 further reinforces this direction. OpenAI positions it as a model for real work, with stronger planning, more effective tool use, greater autonomy in multi-step tasks, and improved performance in areas such as agentic coding, computer use, knowledge work, and research. From a testing and quality engineering perspective, this matters because it confirms the shift away from “AI for generating snippets of code” toward AI that is increasingly capable of operating across environments, tools, and longer task flows.

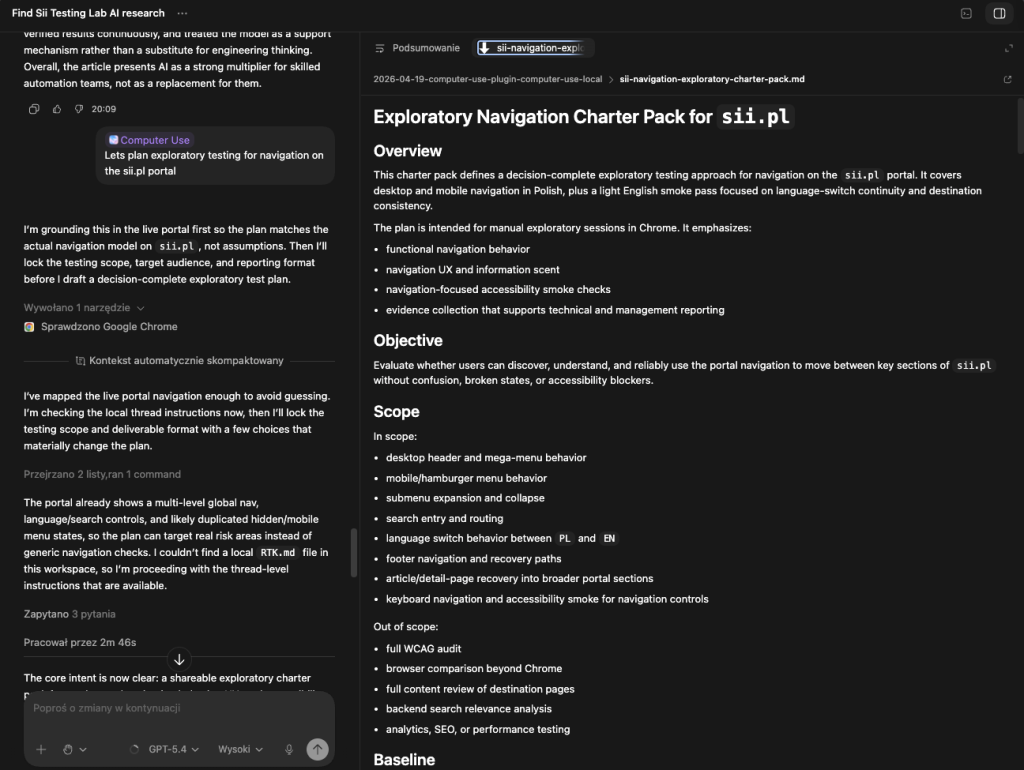

Claude Opus 4.7 and Claude Code – the next step toward long-form work

At the same time, a second market direction is developing very clearly: tools that can read a codebase, make changes across multiple files, execute commands, run tests, and maintain context across longer workflows. This is no longer just “help with code,” but a layer that is starting to resemble an executor of part of the work.

For test architects and test developers, this matters because exactly these kinds of tasks – multi-step, cross-cutting, sitting between code, tests, logs, and environments – are among the most expensive and time-consuming today. The better models become at handling this kind of work, the greater their impact on quality and on how frameworks are maintained.

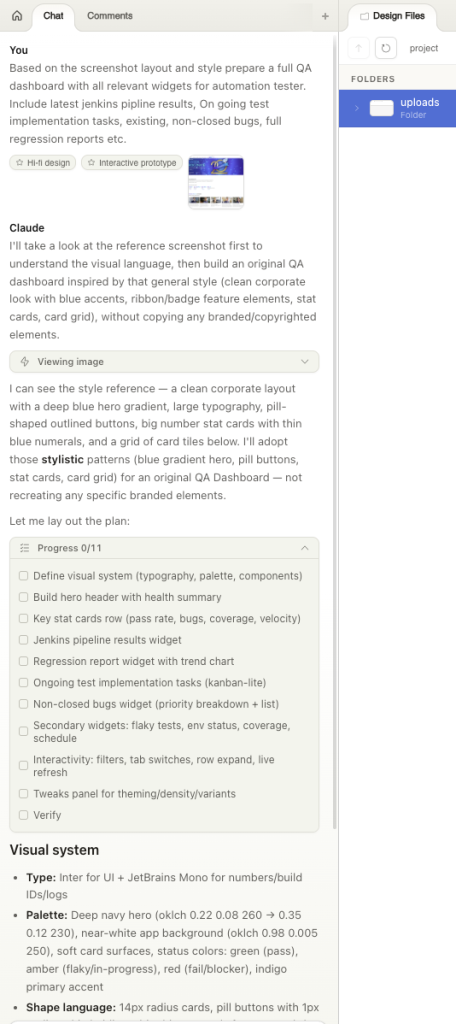

Claude Design – why it matters for testing too

Another interesting direction is the creation of more polished final artifacts: prototypes, slide decks, one-pagers, and other working materials. For testing, this may seem less obvious than work on code or GUI, but in practice, it touches an important part of the everyday responsibilities of a quality architect.

More and more work today ends not only with a test or an execution result, but also with a communication artifact:

- a test strategy,

- a risk summary,

- a release readiness summary,

- an evidence pack,

- or a presentation for stakeholders.

If AI tools start producing those artifacts well, that changes not only the way analysis is done, but also the way quality is communicated in an organization.

This also increases the importance of non-functional testing, especially in areas related to user experience and accessibility. The more teams design and evaluate at the level of interface and value communication, the greater the need to connect manual, exploratory, and automated testing in a sensible way.

Codex Security and Claude Mythos – when testing meets security

An important extension of this picture is the growing security angle. Major vendors are starting to build solutions that not only help write or execute tests, but also build system context, identify vulnerabilities, prioritize risk, and support remediation work.

For a Test Developer, this is a strong signal that the boundary between testing, risk validation, system-quality analysis, and security will become increasingly blurred. Frameworks and quality processes will need to be designed not only for test execution, but also for collaboration with agents that examine a system more broadly – from correctness to resilience and the real impact of identified issues.

Governance – why it is becoming part of quality architecture

The more an agent can act on code, GUI, terminal, or an execution environment, the more important safety boundaries and usage rules become. Vendor materials increasingly emphasize isolation of environments, sandboxing, access control, and actions that require human approval.

For organizations, this means governance can no longer be treated as a layer added “after everything else.” It has to become part of the core of test and quality architecture. Teams need to define which accounts agents work on, which environments they may touch, which actions are automatic, which require a human in the loop, how their steps are logged, and where the evidence of completed work is stored.
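
The questions in the paragraph above (which environments, which actions are automatic, which need a human, how steps are logged) can be written down as an explicit policy rather than left as convention. The following is a minimal sketch, not a vendor feature: `AgentPolicy`, `Decision`, `AuditLog`, and the environment and action names in the usage note are all hypothetical.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Dict, FrozenSet, List


class Decision(Enum):
    ALLOW = "allow"
    NEEDS_APPROVAL = "needs_approval"
    DENY = "deny"


@dataclass(frozen=True)
class AgentPolicy:
    """Declares where an agent may act and which actions need a human."""

    allowed_environments: FrozenSet[str]
    automatic_actions: FrozenSet[str]
    approval_actions: FrozenSet[str]


@dataclass
class AuditLog:
    """Append-only record of every decision taken for an agent request."""

    entries: List[Dict[str, str]] = field(default_factory=list)


def decide(policy: AgentPolicy, environment: str, action: str, log: AuditLog) -> Decision:
    """Evaluate one requested action and record the outcome."""
    if environment not in policy.allowed_environments:
        decision = Decision.DENY
    elif action in policy.automatic_actions:
        decision = Decision.ALLOW
    elif action in policy.approval_actions:
        decision = Decision.NEEDS_APPROVAL
    else:
        # Default deny: anything not listed explicitly is refused.
        decision = Decision.DENY
    log.entries.append(
        {"environment": environment, "action": action, "decision": decision.value}
    )
    return decision
```

For example, a policy that allows only a `staging` environment, runs `run_tests` automatically, and routes `reset_database` to a human would deny any request against `production` and log all three outcomes. The default-deny branch is a design choice made for this sketch; it keeps unlisted actions from slipping through as the agent's capabilities grow.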

How does this affect testing right now?

In the short term, the biggest gains will appear where work is multi-step, context-heavy, and time-consuming. That includes change analysis, understanding unfamiliar code, adapting tests after UI changes, reproducing defects, connecting logs and screenshots with likely root-cause hypotheses, and preparing final materials for teams and stakeholders.

At the same time, a one-off successful agent run should not be confused with a valuable, stable regression asset. Challenges remain around reliability, test data, assertion quality, access to environments, prompt-injection risk, and the ability to judge whether an output actually makes business sense. That is why the role of the quality architect is not shrinking – it is simply moving to a higher level of responsibility.
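
One way to make the difference between a single successful run and a regression asset concrete is an explicit promotion rule. The sketch below is only an illustration: the function name `is_promotable`, the default of five consecutive passes, and the idea of a boolean review flag are assumptions, and real criteria would likely also cover test data and environment stability.

```python
from typing import Sequence


def is_promotable(
    run_results: Sequence[bool],
    assertions_reviewed: bool,
    required_passes: int = 5,
) -> bool:
    """Decide whether an agent-produced test may join the regression suite.

    `run_results` is the pass/fail history, oldest first. The test qualifies
    only if a human has reviewed its assertions and the most recent
    `required_passes` runs all passed.
    """
    if required_passes < 1:
        raise ValueError("required_passes must be at least 1")
    if not assertions_reviewed:
        return False
    recent = list(run_results)[-required_passes:]
    return len(recent) == required_passes and all(recent)
```

Under this rule a test with one green run is not promotable, and neither is a test with a long green history whose assertions nobody has checked for business sense.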

Conditions for success and key limitations

It is important to say clearly that increased model capability does not automatically mean mature adoption in quality engineering. Teams will get the greatest value where AI is embedded in a well-designed framework, predictable libraries, controlled environments, and a sensible review process. Without that, even a highly capable agent may accelerate work only on the surface – producing results faster, but not necessarily in a way that is stable, maintainable, and safe enough.

The limitations remain real. The quality of test data, the choice of assertions, access to environments, prompt-injection risk, control over actions performed by an agent, and the team’s ability to judge whether an output makes business sense still matter.

That is exactly why the role of the human does not disappear from the process. What changes is its nature: less manual execution of each step and more deliberate design of collaboration among people, tools, and agents.

Where is all of this heading?

The most interesting thing about the current market direction is not that models are getting better at writing code. More importantly, they are increasingly participating in work carried out on code, tools, interfaces, and environments. That is where the everyday practice of a Test Architect is beginning to change.

Where AI was once associated mainly with support in writing tests, it is now clearly influencing the way the entire quality system is designed: from implementation, through analysis and exploration, to governance, security, and communication of outcomes.

The greatest value will come to teams that treat AI not as a simple accelerator, but as a new layer supporting a deliberately designed quality architecture. Humans will not disappear from the process. Their role will increasingly involve designing collaboration between people, tools, and agents.

The release of GPT-5.5 further confirms this direction. OpenAI presents it as a model for real work, with clear gains in agentic coding, computer use, tool use, and knowledge work, which fits the broader shift described in this article: AI is becoming less of an “idea chat” and more of a layer supporting real engineering and quality workflows.

Final conclusion

For a Test Developer, the conclusion is practical: the part of the job that is changing fastest is the work carried out on code, tools, interfaces, and environments, not the writing of code itself.

If just a few months ago AI could still be described mainly as support for creating tests, today it is increasingly about designing a quality system in which AI supports not only implementation, but also analysis, exploration, security, governance, and communication of results. This shift is much more important than improvement in code generation alone, and it may have the strongest impact on testing in the years ahead.

Sources

- OpenAI – “Introducing upgrades to Codex”

- OpenAI – “Codex Security: now in research preview”

- OpenAI Help Center – “Codex Security”

- OpenAI Help Center – “What to know about the Sora discontinuation”

- Anthropic – “Introducing Claude Opus 4.7”

- Anthropic – “Introducing Claude Design by Anthropic Labs”

- Anthropic – “Claude Code by Anthropic” and Claude Code overview documentation

- Anthropic – Project Glasswing and model documentation referencing Claude Mythos Preview
