Recently, the band Coma made a comeback after years to play a few shows. Their resurrected popularity heated the fans to a fever pitch… Finally, zero-hour strikes. Thousands of fans are refreshing the page. Tickets vanish in the blink of an eye, like toasters on Black Friday. There’s only one left, and both Adam and Eve see it. They both quickly click “Reserve and Pay.” Click! The page reloads, a loading spinner appears… and behind the scenes, in the server room, a drama unfolds. A drama that will determine whether it’s Adam, Eve, or neither of them who will get to sing their favorite song in front of the stage… And if they’ll even find out what happened.

In that fraction of a second, the system has to decrement the ticket count, create a reservation, and process the payment. But what if both requests see that the last ticket is still available? Should two people be charged? Or what if the external payment provider returns an error, or simply times out?

If the ticket has already been reserved but the payment fails, neither Adam nor Eve gets it, but no one else can buy it. It’s frozen in a digital limbo. This is a scenario that gives everyone nightmares – not just Coma fans, but us developers, too.

So, how do you design a system that gracefully handles situations like these? The answer lies in combining the transactional guarantees of modern databases with design patterns crafted for the world of distributed systems.

In this article, we’ll analyze the code of a demo project to see how to do it right.

After reading this article, you’ll know:

- What the fundamental transactional mechanisms in MongoDB are.

- How to handle race conditions using snapshot isolation.

- How to combine strong atomicity guarantees (ACID) with patterns that provide eventual consistency within a single business process.

- What the Transactional Outbox pattern is and how it solves the problem of unreliable communication in distributed systems.

- How to manage multi-step processes using the Saga pattern with a choreography model.

- How to ensure correct and efficient background job processing in a multi-instance environment.

- What eventual consistency is and why it’s a conscious architectural trade-off.

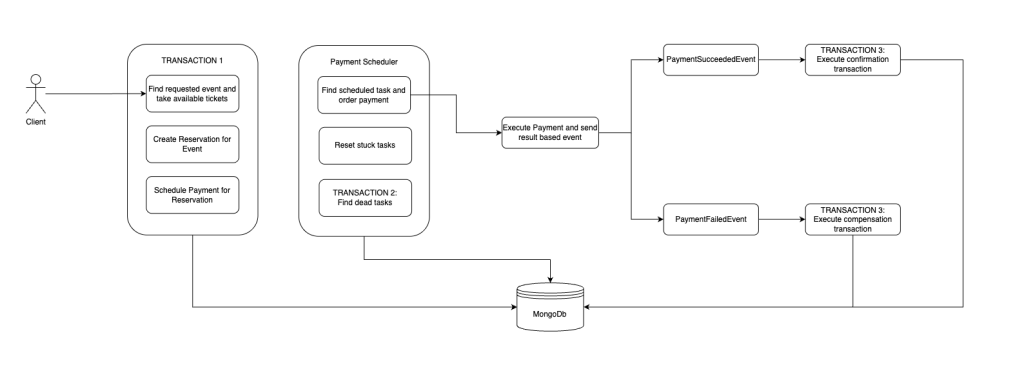

Architecture

Let’s start with a high-level overview of the architecture we’ll be implementing in this demo. Of course, the full code is available for you to download and run yourself.

At first glance, we can see three main acts of this process:

- Atomic order placement: Everything starts with a customer action that initiates a single, indivisible transaction. Within this operation, the system simultaneously reserves resources (e.g., tickets), creates a reservation document, and – crucially – saves a payment task in the database. This last step is the heart of the Outbox Pattern, which will be discussed further later on.

- Asynchronous payment processing: An independent component called the Payment Scheduler operates in the background. It works like a postman, regularly checking the database for scheduled tasks. When it finds such a task, it attempts to process the payment by communicating with an external system. Upon receiving a response, it publishes an event corresponding to the result: SuccessPaymentEvent or FailedPaymentEvent.

- Finalization or compensation: The system reactively listens for the published events. A successful payment triggers a confirmation transaction that finalizes the reservation. A failure, on the other hand, initiates a compensating transaction that “cleans up” after the failed operation – for example, by canceling the reservation and releasing the previously held tickets, thus restoring the system to a consistent state. This is where the philosophy of the Saga Pattern gives us a little wink.

Implementation

It all begins with a simple HTTP request that hits our controller. We see a standard endpoint that accepts the request and delegates all the work to a service. Note the HttpStatus.ACCEPTED status – it’s a subtle hint to the client that their request has been accepted for processing, but the process isn’t finished yet.

All or nothing

    @RestController
    @RequestMapping("/reservations")
    class ReservationController(private val reservationService: ReservationService) {
        // ...

        @PostMapping
        fun createReservation(@RequestBody request: ReservationRequest): ResponseEntity<ReservationResponse> {
            logger.info("Received reservation request: $request")
            return ResponseEntity.status(HttpStatus.ACCEPTED) // Accepted for processing
                .body(reservationService.createReservation(request).toReservationResponse())
        }
    }

From here, we move to the service, which begins a sequence of atomic steps:

    class ReservationService(...) {

        @Transactional
        @Retryable(value = [DataIntegrityViolationException::class], maxAttempts = 3, backoff = Backoff(delay = 2000, multiplier = 2.0))
        fun createReservation(reservationRequest: ReservationRequest): Reservation {
            // 1. Load the event and check availability
            val requestedEvent = eventRepository.findById(reservationRequest.eventId)
                .orElseThrow { EventNotFound() }
                .toModel()

            if (requestedEvent.areTicketsInsufficient(reservationRequest.ticketCount)) {
                logger.error("Not enough tickets available for event: ${requestedEvent.eventId}")
                throw NotEnoughTicketException()
            }

            // 2. Reserve the tickets on the event document
            val reservedTickets = requestedEvent.reserveTickets(reservationRequest.ticketCount)
            eventRepository.save(requestedEvent.toDocument())
            logger.info("Reserved tickets: $reservedTickets for event: ${requestedEvent.eventId}")

            // 3. Create the reservation in PENDING state
            val reservation = reservationRepository.save(
                ReservationDocument(
                    eventId = reservationRequest.eventId,
                    userId = reservationRequest.userId,
                    ticketCount = reservationRequest.ticketCount,
                    reservedAt = Instant.now(clock),
                    reservationStatus = Reservation.ReservationStatus.PENDING.name
                )
            ).toModel()
            logger.info("Created reservation: $reservation for user: ${reservationRequest.userId}")

            // 4. Schedule the payment in the outbox, inside the same transaction
            paymentOutbox.save(
                PaymentOutboxTask(
                    paymentStatus = PaymentStatus.SCHEDULED.name,
                    paymentRequest = reservation.toPaymentRequest(reservationRequest, reservedTickets),
                    createdAt = Instant.now(clock),
                )
            )
            logger.info("Payment outbox task scheduled for reservation: ${reservation.reservationId}")

            // Returned so the controller can map it to a ReservationResponse
            return reservation
        }

        // ...
    }

Spring’s @Transactional annotation (backed here by the transaction manager from Spring Data MongoDB) is our promise to the system: all database write operations within this method (eventRepository.save, reservationRepository.save, paymentOutbox.save) must succeed as a single, indivisible unit. If any step fails (e.g., a write conflict because someone else bought the ticket at the same moment), the entire transaction will be rolled back. The database state will revert to how it was before, as if Adam’s or Eve’s request had never arrived. This is our atomicity in action.

The @Retryable annotation

However, @Transactional is only half the story in the race for the last ticket. The crucial complement is the @Retryable annotation, which solves the problem of concurrent write attempts. To fully understand the mechanism at play here, we must look under the hood of MongoDB transactions.

The race for the last ticket: MongoDB’s strategy and @Retryable in action

Let’s imagine what happens when Adam and Eve click the button in the same millisecond:

- Two parallel realities: The system starts two separate transactions, and each creates its own “snapshot” of the database state at that moment. In both of these snapshots, there is one available ticket. Importantly, one transaction does not block the other’s read. Operating on their own copies of reality, both reach the same conclusion: “Awesome, the last ticket is free – I’m reserving it!”

- “First writer wins” and an Immediate Conflict: Let’s assume Adam’s transaction is a fraction of a second faster and is the first to execute its write operation (eventRepository.save). Even though the transaction has not yet been committed, MongoDB has placed a temporary write lock on the modified document. When Eve’s transaction, operating on its (now outdated) snapshot, also attempts to write to the same document, it immediately encounters this lock.

- Fail fast: Eve’s transaction doesn’t wait until the end. MongoDB sees right away that another transaction has already “claimed” this document, so it immediately aborts Eve’s transaction with a write conflict error (WriteConflict), which is often mapped to DataIntegrityViolationException in the Spring world.

- Automatic self-healing: This is precisely where @Retryable comes into play. Instead of just returning an obscure technical error to Eve, the mechanism catches the conflict exception and says, “No worries, this is a common issue under heavy load. Let’s try that again!” The entire createReservation method for Eve is executed again.

- A new, updated reality: Eve’s transaction creates a new data snapshot on the second attempt. This time, there are no more available tickets in the database – they were correctly reserved by Adam. The logic within the method (if (areTicketsInsufficient…)) immediately detects this, and the transaction now finishes in a controlled manner, with a clear business message for Eve: “Sorry, the tickets are no longer available.”

Thanks to @Retryable, the system self-heals from a transient technical issue and transforms it into a clear and truthful business response: “Sorry, someone was faster.”
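
The sequence above can be reproduced without a database. The following is a minimal in-memory sketch, not the demo project’s code: TicketStore, withRetry, and the beforeWrite hook are hypothetical names introduced only for illustration. The store rejects any write based on a stale snapshot (first writer wins), and withRetry plays the role that @Retryable plays in the service.

```kotlin
// Hypothetical in-memory model; none of these names come from the demo project.
class WriteConflict : RuntimeException("write conflict")
class NotEnoughTickets : RuntimeException("tickets are no longer available")

data class Snapshot(val version: Int, val available: Int)

class TicketStore(initial: Int) {
    private var current = Snapshot(version = 0, available = initial)

    fun read(): Snapshot = current

    // First writer wins: a write based on a stale snapshot is rejected.
    fun write(basedOn: Snapshot, newAvailable: Int) {
        if (basedOn.version != current.version) throw WriteConflict()
        current = Snapshot(current.version + 1, newAvailable)
    }
}

// Stand-in for @Retryable: re-run the whole block when a conflict occurs.
fun <T> withRetry(maxAttempts: Int, block: () -> T): T {
    var attempt = 1
    while (true) {
        try {
            return block()
        } catch (e: WriteConflict) {
            if (attempt++ >= maxAttempts) throw e
        }
    }
}

// Returns the number of tickets left after a successful reservation.
fun reserve(store: TicketStore, count: Int, beforeWrite: () -> Unit = {}): Int =
    withRetry(maxAttempts = 3) {
        val snapshot = store.read()
        if (snapshot.available < count) throw NotEnoughTickets()
        beforeWrite() // hook that lets a competing buyer commit between read and write
        store.write(snapshot, snapshot.available - count)
        snapshot.available - count
    }

fun main() {
    val store = TicketStore(initial = 1)
    var adamDone = false
    val outcome = try {
        // Eve's first attempt reads the snapshot, then Adam commits before her write.
        reserve(store, 1) {
            if (!adamDone) { adamDone = true; reserve(store, 1) }
        }
        "Eve got the ticket"
    } catch (e: NotEnoughTickets) {
        "Eve: ${e.message}"
    }
    println(outcome)      // Eve: tickets are no longer available
    println(store.read()) // Snapshot(version=1, available=0)
}
```

Eve’s first attempt fails with a technical conflict, the retry re-reads the data, and only then does the business rule produce the final answer.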

This mechanism:

- Prevents overselling and data chaos.

- Ensures business consistency even under heavy load.

- Provides a significantly better user experience, building trust in the platform.

In short, it’s a bridge that connects the technical strategy for handling concurrency conflicts with a smooth and logical experience for the end user.

The reliable mailman – the Outbox pattern

Notice that the transaction above does not directly call a payment API. This is intentional. Calling an external service during a database transaction is asking for trouble. Such a service might respond slowly, locking up valuable database resources, or it might be unavailable, which would cause our entire transaction to roll back, even though the ticket reservation itself was possible.

Instead, we use the Outbox pattern. Within the same transaction, we create all the necessary reservations and records, and at the end, we save a task that is meant to be executed only after the transaction is committed. This allows us to avoid the problems described above and ensures that the customer will be charged if and only if all the necessary resources were successfully reserved for them atomically.

    paymentOutbox.save(
        PaymentOutboxTask(
            paymentStatus = PaymentStatus.SCHEDULED.name,
            paymentRequest = reservation.toPaymentRequest(reservationRequest, reservedTickets),
            createdAt = Instant.now(clock),
        )
    )

Since this write is part of the atomic transaction, we have an ironclad guarantee: if the reservation was successfully created, the payment task exists as well, and if the transaction was rolled back, neither of them does. We’ve shifted the burden of immediate payment processing, trading it for a guaranteed “to-do” item for the future.
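
To make the “both or neither” guarantee concrete, here is a small sketch under simplifying assumptions: InMemoryDb, its transaction function, and placeOrder are hypothetical stand-ins for a real MongoDB transaction. Writes are staged on copies and published only if the whole block completes.

```kotlin
// Hypothetical in-memory stand-in for a transactional database.
data class Reservation(val id: Int, val status: String)
data class OutboxTask(val reservationId: Int, val status: String)

class InMemoryDb {
    var reservations: List<Reservation> = emptyList()
        private set
    var outbox: List<OutboxTask> = emptyList()
        private set

    // Stage writes on copies; publish them only if the block finishes normally.
    fun transaction(block: (MutableList<Reservation>, MutableList<OutboxTask>) -> Unit) {
        val stagedReservations = reservations.toMutableList()
        val stagedOutbox = outbox.toMutableList()
        block(stagedReservations, stagedOutbox) // an exception here discards both staged lists
        reservations = stagedReservations
        outbox = stagedOutbox
    }
}

fun placeOrder(db: InMemoryDb, id: Int, failBeforeOutbox: Boolean = false) {
    db.transaction { reservations, outbox ->
        reservations.add(Reservation(id, "PENDING"))
        if (failBeforeOutbox) error("simulated failure")
        outbox.add(OutboxTask(id, "SCHEDULED"))
    }
}

fun main() {
    val db = InMemoryDb()
    placeOrder(db, id = 1)
    runCatching { placeOrder(db, id = 2, failBeforeOutbox = true) }
    // The failed order left no reservation behind, so there is nothing to charge.
    println("reservations=${db.reservations.size}, outbox=${db.outbox.size}") // reservations=1, outbox=1
}
```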

For a broader context, I invite you to read: Transactional Outbox Pattern.

Asynchronous processing and saga choreography

Now it’s time for the PaymentOutboxScheduler – a component that runs in the background and regularly checks our “outbox.”

    @Component
    class PaymentOutboxScheduler(
        private val paymentOutboxRepository: PaymentOutboxRepository,
        private val paymentService: PaymentService
    ) {
        private val logger = logger {}

        @Scheduled(fixedDelayString = "PT5S")
        fun processPaymentOutbox() {
            val startedTask = paymentOutboxRepository.findAndStartPaymentTask() ?: return
            logger.info("Started payment outbox task: $startedTask for processing")
            val processedTask = paymentService.process(startedTask)
            paymentOutboxRepository.save(processedTask)
        }

        @Scheduled(fixedDelayString = "PT90S")
        @SchedulerLock(name = "outboxPaymentTaskCleanupLock", lockAtMostFor = "5m", lockAtLeastFor = "30s")
        fun cleanupStuckTasks() {
            paymentOutboxRepository.resetStuckTasks()
            paymentOutboxRepository.findDeadTasks().forEach { paymentService.notifyPaymentResult(it) }
        }
    }

The @Scheduled annotation makes Spring regularly execute the processPaymentOutbox method. Its job is to:

- find a task that’s ready to be processed,

- pick it up,

- attempt to charge the customer in paymentService.process(startedTask),

- emit the appropriate event to the system,

- finally, save the task as completed.

It’s worth spending a moment on the method that finds the tasks. Under the hood, it uses the findAndModify method at the repository level. This operation is designed to be a single atomic action on the document it finds, which is precisely what protects us from a situation where more than one instance of our application might try to pick up the same task from the database.
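
The value of an atomic find-and-modify is easiest to see with several workers competing for the same tasks. The sketch below is an assumption-laden illustration: OutboxCollection, findAndStart, and claimAll are hypothetical names, and a JVM lock stands in for the per-document atomicity that MongoDB’s findAndModify provides.

```kotlin
import java.util.concurrent.ConcurrentLinkedQueue
import kotlin.concurrent.thread

enum class Status { SCHEDULED, PROCESSING }
class Task(val id: Int, var status: Status = Status.SCHEDULED)

// Hypothetical stand-in for the outbox collection.
class OutboxCollection(private val tasks: List<Task>) {
    // "Find" and "modify" happen as one indivisible step: no other caller
    // can observe the task between the lookup and the status change.
    @Synchronized
    fun findAndStart(): Task? =
        tasks.firstOrNull { it.status == Status.SCHEDULED }
            ?.also { it.status = Status.PROCESSING }
}

// Several workers (think: application instances) drain the collection concurrently.
fun claimAll(collection: OutboxCollection, workers: Int): List<Int> {
    val claimed = ConcurrentLinkedQueue<Int>()
    val threads = (1..workers).map {
        thread {
            while (true) {
                val task = collection.findAndStart() ?: break
                claimed.add(task.id)
            }
        }
    }
    threads.forEach { it.join() }
    return claimed.toList()
}

fun main() {
    val collection = OutboxCollection(List(100) { Task(it) })
    val claimed = claimAll(collection, workers = 4)
    // Every task is claimed exactly once, regardless of thread interleaving.
    println("claimed=${claimed.size}, distinct=${claimed.toSet().size}") // claimed=100, distinct=100
}
```

If the lookup and the status change were two separate calls, two workers could both see the same SCHEDULED task and both try to charge the customer.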

Architect’s note: The database poller pattern, which periodically scans a collection, is an elegant and simple solution. However, it’s worth remembering that such scanning can become a bottleneck in systems with extremely high throughput (thousands of tasks per second). In those scenarios, one might consider using dedicated, specialized messaging systems (like Kafka or RabbitMQ). For most cases (especially in a demo), the solution shown here is an ideal compromise between implementation simplicity and performance.

In contrast, a different approach is used in the second scheduled method, cleanupStuckTasks.

This supervisor-style job runs at a much lower frequency and looks for tasks that, for some reason, have gotten stuck in the PROCESSING state. They are then reset to SCHEDULED so they can be picked up again. This is a crucial self-healing mechanism, but it requires one fundamental guarantee from the external payment system: idempotency.

What if a task was completed – the payment was charged – but our service crashed before updating the status in the outbox? Reprocessing the reset task must not lead to double-charging the customer. That’s why our payment request must include a unique idempotency key (idempotencyKey), which ensures that the payment provider will process a given transaction only once, regardless of how many times we send the request. The cleanup job also handles “dead” tasks that have failed multiple times: it eventually marks them as failed and emits a payment failure event to release the associated resources.
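
A rough sketch of what the idempotency key buys us, seen from the provider’s side. FakePaymentProvider and ChargeRequest are hypothetical; a real provider’s API and key format will differ.

```kotlin
// Hypothetical request and provider; only the idempotencyKey idea comes from the article.
data class ChargeRequest(val idempotencyKey: String, val amountCents: Long)

class FakePaymentProvider {
    private val processed = mutableMapOf<String, String>() // idempotency key -> payment id
    var chargesExecuted = 0
        private set

    // A repeated key returns the earlier result instead of charging again.
    fun charge(request: ChargeRequest): String =
        processed.getOrPut(request.idempotencyKey) {
            chargesExecuted++
            "payment-$chargesExecuted"
        }
}

fun main() {
    val provider = FakePaymentProvider()
    val request = ChargeRequest(idempotencyKey = "reservation-42", amountCents = 15_000)

    val first = provider.charge(request)
    val retry = provider.charge(request) // e.g. after the stuck task was reset to SCHEDULED

    println("$first $retry charges=${provider.chargesExecuted}") // payment-1 payment-1 charges=1
}
```

Deriving the key from something stable, such as the reservation identifier, is one reasonable choice, since a reset task then naturally resends the same key.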

The difference between these background processes also lies in how they handle concurrency in a multi-instance environment. cleanupStuckTasks operates on multiple documents. To do this safely, we need a mechanism to ensure that only one instance can execute the job at a time. This is a common challenge, which is why ready-made solutions like ShedLock exist. The @SchedulerLock annotation acts just like a lock, creating an entry in a special database collection. This prevents other instances from acquiring the lock and running the same job, giving us certainty that the cleanup process will run only once per cycle.
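
The two lock parameters from the annotation can be modeled in a few lines. This is a simplified sketch of the idea, not ShedLock’s actual implementation: LockTable and its methods are hypothetical, and time is passed in explicitly to keep the example deterministic.

```kotlin
import java.time.Duration
import java.time.Instant

// Hypothetical stand-in for the lock collection; not ShedLock's real code.
class LockTable {
    private val lockedUntil = mutableMapOf<String, Instant>()

    // Succeeds only if nobody holds an unexpired lock with this name.
    // lockAtMostFor bounds how long a crashed holder can block everyone else.
    @Synchronized
    fun tryLock(name: String, now: Instant, lockAtMostFor: Duration): Boolean {
        val until = lockedUntil[name]
        if (until != null && until.isAfter(now)) return false
        lockedUntil[name] = now.plus(lockAtMostFor)
        return true
    }

    // Releases the lock, but never earlier than lockedAt + lockAtLeastFor.
    @Synchronized
    fun unlock(name: String, lockedAt: Instant, now: Instant, lockAtLeastFor: Duration) {
        val earliest = lockedAt.plus(lockAtLeastFor)
        lockedUntil[name] = if (earliest.isAfter(now)) earliest else now
    }
}

fun main() {
    val table = LockTable()
    val t0 = Instant.parse("2024-01-01T00:00:00Z")
    val atMost = Duration.ofMinutes(5)
    val atLeast = Duration.ofSeconds(30)

    println(table.tryLock("cleanup", t0, atMost))                 // true: instance A acquires
    println(table.tryLock("cleanup", t0.plusSeconds(1), atMost))  // false: instance B is refused
    table.unlock("cleanup", t0, t0.plusSeconds(2), atLeast)       // A finishes quickly
    println(table.tryLock("cleanup", t0.plusSeconds(10), atMost)) // false: still within lockAtLeastFor
    println(table.tryLock("cleanup", t0.plusSeconds(31), atMost)) // true: free again
}
```

In this model, lockAtLeastFor keeps a fast job from being re-run immediately by another instance, while lockAtMostFor acts as a safety net if the holder dies without unlocking.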

The verdict: confirmation or compensation

The ReservationService listens for two types of events that result from the payment attempt. Each of them initiates a separate, atomic transaction that constitutes the final act of our business process.

Positive scenario – payment succeeded:

    @EventListener
    @Transactional
    fun handleSuccessfulPaymentEvent(event: SuccessPaymentEvent) {
        reservationRepository.confirm(event.reservationId)
        eventRepository.confirm(event.eventId, event.ticketIds)
        logger.info("Payment successful...")
    }

When the payment succeeds, a new transaction is started that finalizes the process. It changes the reservation status from PENDING to CONFIRMED and the tickets’ status to SOLD. From this moment on, the ticket officially belongs to Adam or Eve.

Negative scenario – payment failed:

    @EventListener
    @Transactional
    fun handleFailedPaymentEvent(event: FailedPaymentEvent) {
        reservationRepository.cancel(event.reservationId)
        eventRepository.release(event.eventId, event.ticketIds)
        logger.info("Payment failed... tickets released")
    }

If the payment fails, a compensating transaction is executed. This is a key element that restores order in the system. The reservation is canceled, and most importantly, the tickets are released (eventRepository.release) and return to the public pool, ready to be purchased by someone else.

Why is this a Saga?

This multi-step process is a simple implementation of the Saga pattern, a mechanism for managing data consistency across distributed systems without needing long-running, blocking distributed transactions, which are often impractical and scale poorly.

The reservation process fits perfectly into the definition of a Saga, because:

- It consists of a sequence of local transactions that together form a complete business process:

  - A transaction reserves the ticket and creates a task in the outbox.

  - A scheduled operation processes the payment.

  - A transaction confirms the reservation or cancels it.

- It includes compensating transactions: If any of the steps after the first transaction fail (e.g., the payment fails), the Saga triggers compensating actions (handleFailedPaymentEvent) that undo the effects of previous steps. In our case, this means releasing the tickets and canceling the reservation.

- It ensures business-level consistency: Although the system is not consistent at every millisecond (there is a PENDING state), the Saga guarantees that the entire business process will end in one of two consistent states: either the reservation is fully paid and confirmed, or it is canceled, and the tickets are returned to the pool. The system cannot get stuck in an inconsistent intermediate state.
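
The three properties listed above can be condensed into a tiny state model. This is a sketch for illustration only: SagaState, placeReservation, and settle are hypothetical names, and a single ticket counter stands in for the event document.

```kotlin
enum class ReservationStatus { PENDING, CONFIRMED, CANCELLED }
enum class PaymentOutcome { SUCCEEDED, FAILED }

// Hypothetical snapshot of the data the Saga touches.
data class SagaState(val availableTickets: Int, val status: ReservationStatus? = null)

// Step 1: local transaction that holds a ticket and opens the Saga.
fun placeReservation(state: SagaState): SagaState {
    require(state.availableTickets > 0) { "no tickets left" }
    return SagaState(state.availableTickets - 1, ReservationStatus.PENDING)
}

// Step 3: confirm on success, compensate (release the ticket) on failure.
fun settle(state: SagaState, outcome: PaymentOutcome): SagaState {
    check(state.status == ReservationStatus.PENDING) { "saga already finished" }
    return when (outcome) {
        PaymentOutcome.SUCCEEDED -> state.copy(status = ReservationStatus.CONFIRMED)
        PaymentOutcome.FAILED -> state.copy(
            availableTickets = state.availableTickets + 1,
            status = ReservationStatus.CANCELLED
        )
    }
}

fun main() {
    val pending = placeReservation(SagaState(availableTickets = 1))
    println(pending)                                   // SagaState(availableTickets=0, status=PENDING)
    println(settle(pending, PaymentOutcome.SUCCEEDED)) // SagaState(availableTickets=0, status=CONFIRMED)
    println(settle(pending, PaymentOutcome.FAILED))    // SagaState(availableTickets=1, status=CANCELLED)
}
```

PENDING is the only non-terminal state, and both ways out of it leave the ticket count and the reservation status in agreement.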

Saga coordination

At this point, it’s worth mentioning the two main Saga coordination approaches:

- Choreography: This is the model used in this scenario. Individual services (or components) subscribe to events emitted by others and react to them by performing their part of the work. There is no central coordinator. Communication is decentralized, which works very well for simpler workflows.

- Orchestration: In this model, a central component (an orchestrator) directs the entire process, instructing each service on what steps to perform. This approach is better suited for more complex Sagas with many steps and intricate conditional logic.

The choreography model is ideal for ticket reservation due to its simplicity and resilience. However, as the number of steps in a business process grows, the orchestration model often becomes easier to manage, monitor, and debug.
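
A stripped-down illustration of choreography, assuming a hypothetical in-process EventBus with string event names (the demo itself relies on Spring’s event mechanism instead). The point is that the publisher does not know who reacts, and the subscriber does not know who published.

```kotlin
// Hypothetical in-process event bus; event names are plain strings here.
class EventBus {
    private val handlers = mutableMapOf<String, MutableList<(Int) -> Unit>>()

    fun subscribe(eventName: String, handler: (Int) -> Unit) {
        handlers.getOrPut(eventName) { mutableListOf() }.add(handler)
    }

    fun publish(eventName: String, reservationId: Int) {
        handlers[eventName].orEmpty().forEach { handler -> handler(reservationId) }
    }
}

fun main() {
    val bus = EventBus()
    val statuses = mutableMapOf(1 to "PENDING", 2 to "PENDING")

    // The reservation component only reacts; nobody tells it what to do next.
    bus.subscribe("PaymentSucceeded") { id -> statuses[id] = "CONFIRMED" }
    bus.subscribe("PaymentFailed") { id -> statuses[id] = "CANCELLED" }

    // The payment component only announces what happened.
    bus.publish("PaymentSucceeded", 1)
    bus.publish("PaymentFailed", 2)

    println(statuses) // {1=CONFIRMED, 2=CANCELLED}
}
```

An orchestrated variant would replace the two subscriptions with one coordinator that calls each step explicitly and tracks the overall progress.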

A deal with time, or, what is “eventual consistency”?

It’s worth pausing for a moment to understand a significant trade-off we’ve made. Some time passes from the moment a user clicks “Buy” until their reservation is finally confirmed or canceled. During this period, the system is in a transitional state: the ticket is reserved, but the reservation has a PENDING status.

This is eventual consistency. We guarantee that the system will eventually reach a consistent state, but it won’t necessarily happen instantly. For the business, this means the user might see a message on their screen like “Processing your reservation, please wait…” instead of an immediate confirmation. This is a deliberate architectural decision. In exchange for this brief period of uncertainty, we gain a system that is incomparably more resilient to failures and scales much better.

Outro

We’ve followed a request’s journey from a single click through a network of transactions and events to a final, consistent state. We’ve created a resilient and flexible workflow instead of building a monolithic, brittle process.

We combined the hard guarantees of atomic transactions in MongoDB to ensure data integrity at the critical moment of reservation with the asynchronous reliability of the Outbox and Saga patterns. This approach allows us to build complex business processes with the peace of mind that a temporary outage of an external service or a crash of our own application won’t leave our data in an inconsistent state. This is what the foundations of modern, robust backend systems look like.

Post-credits scene

The story of Adam and Eve has concluded, the system works, and the tickets have found their owners. The credits roll. But if, like a true fan, you’ve stayed in your seat until the very end, there’s one more treat for you – a look behind the curtain at how this all really works.

Bonus 1: Why do transactions require a Replica Set?

The answer is simple and elegant: transactions were built on the foundation of a mechanism that has existed in MongoDB for years – replication. At the heart of every Replica Set is the oplog (operations log), a chronological journal of all write operations. Secondary nodes “tail” this log to replicate changes and maintain consistency with the primary node.

MongoDB’s engineers leveraged this existing, battle-tested, and incredibly resilient mechanism when designing transactions. The oplog records every step of a transaction, its progress, and its final decision (commit or rollback). It becomes the single, authoritative source of truth about the transaction’s state.

Bonus 2: How can an ACID transaction be distributed?

The key is understanding that the transaction’s atomicity is guaranteed and coordinated in one place: on the primary node. “Distribution” in this context doesn’t mean negotiating the transaction’s outcome among nodes. It means propagating a consistent, atomically committed result to ensure high availability and durability.

Bonus 3: The readPreference trap and the supremacy of the primary

To optimize read performance, MongoDB applications often use the readPreference setting. It’s an instruction to the driver telling it which node in the cluster to read data from. A popular choice is “nearest”, which means querying the member with the lowest network latency (often the geographically closest one), regardless of whether it’s a primary or a secondary.

But here lies a trap. Let’s say we’ve globally set our readPreference to “nearest”. Why do operations within our transaction suddenly fail or slow down?

Once again, the answer lies in consistency. A transaction must operate on a perfectly consistent and up-to-date data snapshot to fulfill its ACID promise. Secondary nodes, by their nature, replicate data with a slight delay. If a transaction were allowed to read from a secondary, it would risk operating on stale data, which could lead to costly errors.

Therefore, MongoDB imposes an ironclad rule: all operations inside an active transaction, both reads and writes, must be directed to the primary node. A global readPreference such as “nearest” is typically not overridden silently; reads in a transaction whose read preference is anything other than primary are rejected, which is why the transaction has to be configured to use primary explicitly. The configuration for this is shown in the attached repository.
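
For orientation, one way to pin transactions to the primary in a Spring Data MongoDB application is to pass TransactionOptions to the transaction manager. This is a sketch under assumptions, not necessarily what the repository does: the class name MongoTransactionConfig is made up, and the read and write concern values are illustrative choices.

```kotlin
import com.mongodb.ReadConcern
import com.mongodb.ReadPreference
import com.mongodb.TransactionOptions
import com.mongodb.WriteConcern
import org.springframework.context.annotation.Bean
import org.springframework.context.annotation.Configuration
import org.springframework.data.mongodb.MongoDatabaseFactory
import org.springframework.data.mongodb.MongoTransactionManager

// Hypothetical configuration class; the demo repository may wire this differently.
@Configuration
class MongoTransactionConfig {

    @Bean
    fun transactionManager(dbFactory: MongoDatabaseFactory): MongoTransactionManager {
        val options = TransactionOptions.builder()
            .readPreference(ReadPreference.primary()) // transactions read from the primary
            .readConcern(ReadConcern.SNAPSHOT)        // illustrative: snapshot isolation
            .writeConcern(WriteConcern.MAJORITY)      // illustrative: durable commits
            .build()
        return MongoTransactionManager(dbFactory, options)
    }
}
```

With this in place, non-transactional queries can keep using the client-wide read preference, while everything running under @Transactional goes to the primary.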
