Artificial intelligence is transforming how we build applications, offering new possibilities for personalization, automation, and data analysis. In particular, models like OpenAI’s GPT-4o are revolutionizing natural language processing (NLP), enabling the creation of intelligent systems for conversations, text analysis, and content generation.

This article aims to answer the following questions:

- How can a Spring-based application be easily and scalably integrated with AI?

- Are popular LLMs just glorified internet search engines?

- What is Spring Functions, and can a bean be… a function?

- What is prompt enrichment?

If you cannot answer at least one of these questions, you are warmly invited to explore this article.

AI integration with applications

As a foundation for modern applications, the Spring Boot ecosystem is an ideal platform for integrating such models. Before diving deeper, however, let’s take a broader look at the current use of AI.

Popular models like GPT-4o are primarily accessed through graphical interfaces, such as ChatGPT. Users visit a website and type a query in a text box, and the model returns an answer in a chat window. This simple and intuitive approach has revolutionized human-technology interaction.

However, when an AI model becomes a core part of a system’s architecture and an integral part of the backend, communication with the model must occur programmatically. While the graphical interface is a convenient starting point, it doesn’t support direct integration with microservices, REST APIs, or cloud infrastructure. Automation, scalability, and seamless system integration are essential in the software world, and a chat window provides none of them.

This brings us to the key topic – AI integration with applications. With such integration, we can build applications that, among other things:

- handle real-time customer queries (popular chatbots embedded in client domains),

- analyze documents or customer reviews, automatically generating summaries or reports,

- intelligently process batch data, analyzing hundreds or thousands of documents for trends at speeds unattainable by humans,

- enrich communication with the model by augmenting prompts with additional data sources such as REST APIs, databases, or message queues,

- analyze IoT data streams in real-time to trigger alerts based on unusual patterns.

The key takeaway is that integrating AI with applications is not meant to replace chatbots like ChatGPT; it fulfills different needs. The two approaches do not compete but complement each other.

Demonstration scenario

Having laid the groundwork for how AI interacts with applications, let’s examine a demonstration of such integration. The scenario involves a user seeking recommendations for the latest science fiction books. To make the interaction as seamless as possible, the system allows the user to submit the query as an audio recording, creating an almost conversational experience with the application.

Our task is to receive the audio recording, extract the query (prompt) from it, query the AI model, and then return the response to the user. What might initially seem trivial becomes more challenging once we understand how LLMs work.

Specifically, the model has no internet access: it relies solely on the dataset it was trained on. Querying it for the latest science fiction books is therefore doomed to fail, as shown below:

This is a fundamental limitation stemming from the model’s architecture and training. Put simply, LLMs do not function as internet search engines.

GPT-4o as an example

GPT-4o is a statistical model trained on a massive dataset that ends at a specific point in the past. After training, the model is “frozen”: it does not learn from new data in real time or retrieve information on its own. Unlike search engines or dynamic applications, it cannot communicate with external information sources at runtime.

This limitation, however, is intentional. It gives better control over the model’s behavior, since the training process is supervised. Interestingly, this design choice is not meant to prevent the model from becoming an omnipotent, sci-fi-like entity, but to preserve the quality of its carefully curated training dataset. Allowing it to access the open internet would expose it to low-quality, unstructured data. Privacy and performance considerations further justify the model’s lack of internet access.

Thus, when designing systems that rely on real-time data, we must employ a technique called “prompt enrichment,” which augments the prompt with context that allows the model to infer answers. In our scenario, this means ensuring access to a reliable data source for the latest sci-fi books and incorporating that data into the prompt.
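To make the idea concrete, here is a minimal sketch of prompt enrichment in plain Java. It is not the article's implementation: the `Book` record, the `PromptEnricher` class, and the prompt wording are all hypothetical, and the book data is hard-coded where a real system would fetch it from an external source.

```java
import java.util.List;
import java.util.stream.Collectors;

// Hypothetical record describing a book returned by an external data source.
record Book(String title, String author, String publishedDate, String infoLink) {}

final class PromptEnricher {

    // Builds a prompt that carries the external data alongside the user's question,
    // so the model can answer from the supplied context instead of its training data.
    static String enrich(String userQuery, List<Book> books) {
        String context = books.stream()
                .map(b -> "- %s by %s (published %s), %s"
                        .formatted(b.title(), b.author(), b.publishedDate(), b.infoLink()))
                .collect(Collectors.joining("\n"));
        return """
                Answer the question using only the context below.

                Context:
                %s

                Question: %s
                """.formatted(context, userQuery);
    }

    public static void main(String[] args) {
        // Placeholder data; a real application would load this from an API or database.
        List<Book> books = List.of(
                new Book("Example Title", "Example Author", "2025-01-01", "https://example.com/book/1"));
        System.out.println(enrich("What are the latest science fiction books?", books));
    }
}
```

The model itself is unchanged in this approach; only the text it receives differs, which is why the technique works with a “frozen” model.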

Implementation

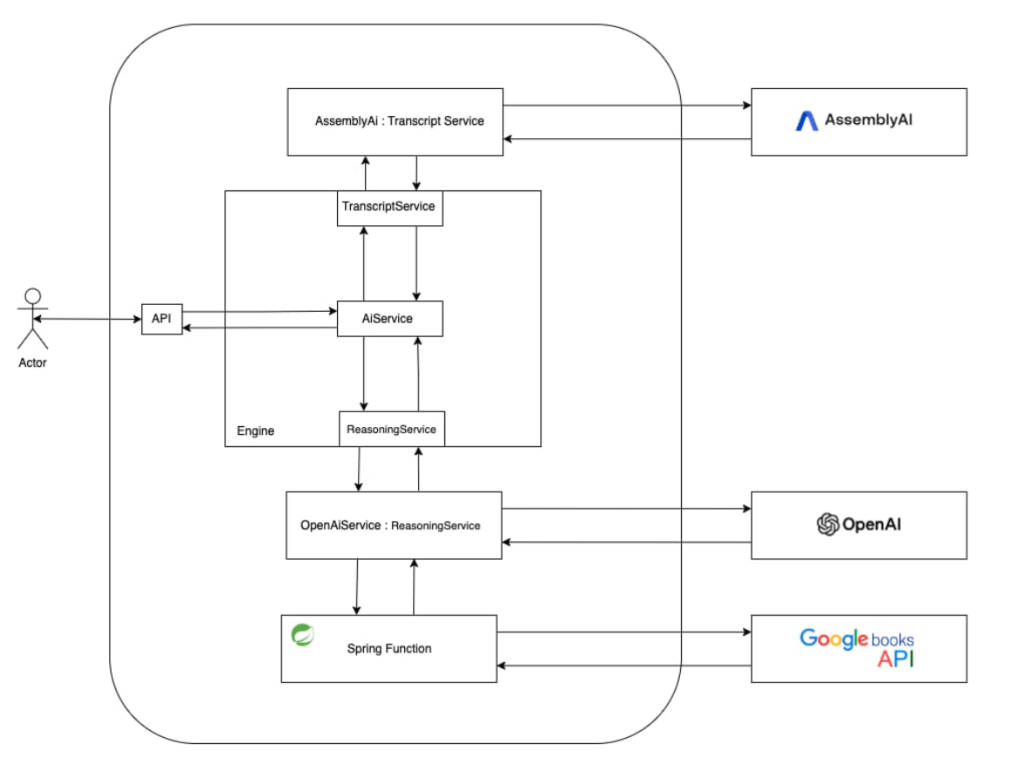

The task described earlier is solved by the architecture shown below:

By leveraging the abstraction layer provided by the TranscriptService and ReasoningService interfaces, we ensure that the application’s domain logic remains decoupled from a specific model, which here is treated as just an implementation of the problem-solving mechanism we are interested in.
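A rough sketch of what this decoupling could look like follows. Only the interface names `TranscriptService` and `ReasoningService` come from the article; the method names, the `RecommendationService` class, and the stub implementations are assumptions made for illustration.

```java
// Port for speech-to-text. The method name and signature are assumptions.
interface TranscriptService {
    String transcribe(byte[] audio);
}

// Port for the reasoning model. The method name and signature are assumptions.
interface ReasoningService {
    String reason(String prompt);
}

// Hypothetical domain service: it depends only on the two interfaces,
// never on a concrete vendor SDK.
final class RecommendationService {
    private final TranscriptService transcripts;
    private final ReasoningService reasoning;

    RecommendationService(TranscriptService transcripts, ReasoningService reasoning) {
        this.transcripts = transcripts;
        this.reasoning = reasoning;
    }

    // Extracts the prompt from the recording, then asks the model to answer it.
    String recommend(byte[] audio) {
        String prompt = transcripts.transcribe(audio);
        return reasoning.reason(prompt);
    }

    public static void main(String[] args) {
        // Stubs stand in for the real adapters (for example AssemblyAI and GPT-4o).
        TranscriptService fakeTranscript = audio -> "latest science fiction books";
        ReasoningService fakeReasoning = prompt -> "Answer for: " + prompt;
        RecommendationService service = new RecommendationService(fakeTranscript, fakeReasoning);
        System.out.println(service.recommend(new byte[0]));
    }
}
```

Because the domain class sees only the interfaces, swapping the transcription provider or the model means writing a new adapter, not touching the domain logic.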

In the above scenario, the responsibility for extracting text from an audio file is delegated to AssemblyAI, a service specialized in this task and chosen partly for its attractive API usage plans. Splitting responsibilities this way means a more resource-intensive model is not spent on transcription: the resources allocated to the GPT integration are focused solely on reasoning and analyzing prompts. This matters because the costs generated by using these models are an important consideration.

To retrieve information about sci-fi books, the Google Books API and Spring Functions were used. Spring Functions is part of the Spring ecosystem and allows the creation of lightweight, reusable functions, each designed to perform a single, clearly defined task while encapsulating all the logic needed for its execution.

What’s particularly interesting is that these functions are registered as beans, enabling their reuse in multiple parts of the application. This approach is further enhanced by Spring Cloud, which supports serverless solutions such as those deployed with AWS Lambda.
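The idea of a function that is looked up by name can be sketched without Spring at all. In the example below, a plain `Map` stands in for the bean container, and the `BookRequest` and `BookResponse` types and the stubbed data are hypothetical. In a Spring application, a method returning a `java.util.function.Function` would typically be annotated with `@Bean` so that the container registers it under the method name.

```java
import java.util.List;
import java.util.Map;
import java.util.function.Function;

// Hypothetical request and response types for the book lookup.
record BookRequest(String genre) {}
record BookResponse(List<String> titles) {}

final class FunctionRegistryDemo {

    // A single-purpose function. In Spring, a method like this would usually
    // carry @Bean so the container registers it by name ("fetchBooks").
    static Function<BookRequest, BookResponse> fetchBooks() {
        // Stubbed data; a real implementation would call an external API here.
        return request -> new BookResponse(List.of("Sample " + request.genre() + " title"));
    }

    // Stand-in for the bean container: functions are looked up by name.
    static final Map<String, Function<BookRequest, BookResponse>> REGISTRY =
            Map.of("fetchBooks", fetchBooks());

    public static void main(String[] args) {
        BookResponse response =
                REGISTRY.get("fetchBooks").apply(new BookRequest("science fiction"));
        System.out.println(response.titles());
    }
}
```

The name-based lookup is what makes it possible to refer to a function by a string such as “fetchBooks” elsewhere in the application.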

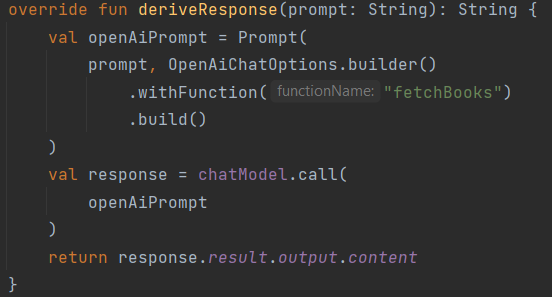

In our scenario, Spring also lets us use these functions by registering them with the prompt we are constructing, as follows:

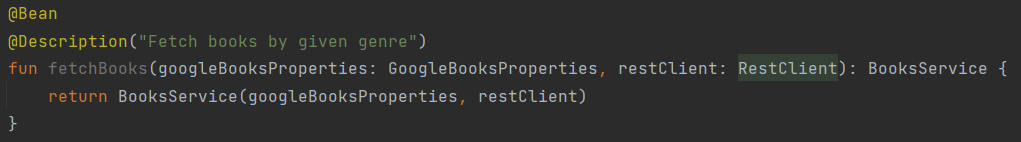

where “fetchBooks” is the name of our function and looks as follows:

I encourage you to explore and analyze the full code in the dedicated repository.

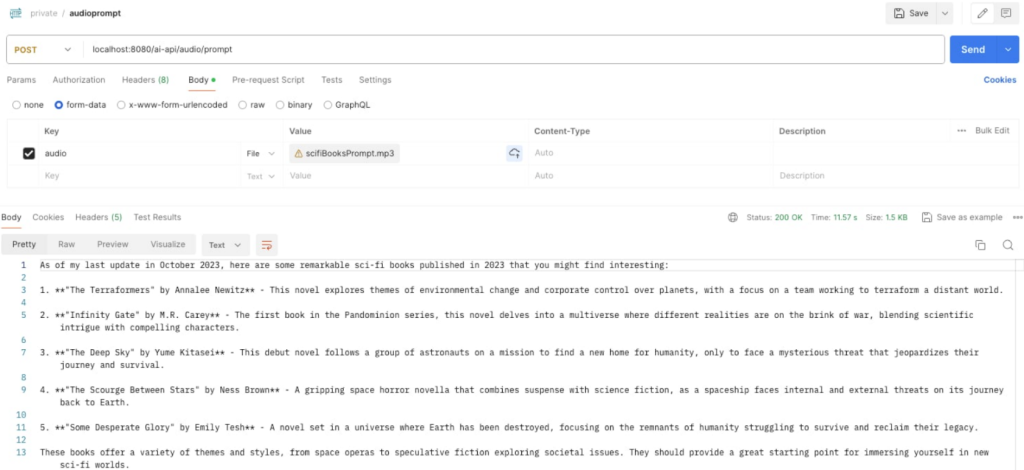

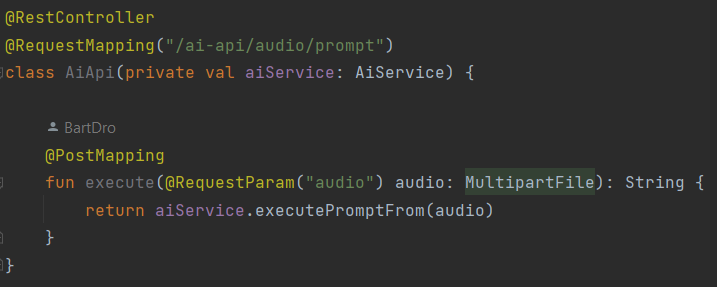

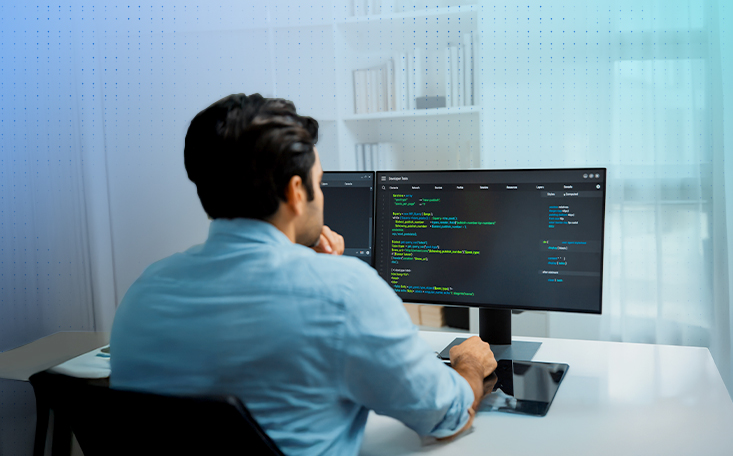

Once this architecture is implemented, we can query the system based on the scenario we’ve outlined by sending a request to the following endpoint:

this will result in the following response:

Evidently, the publication dates are later than the model’s last update date, and the included URLs point to a Google domain. This demonstrates that the model was provided with a contextually enriched prompt, which enabled it to analyze the information and give a well-informed response.

Conclusion

While exploring the possibilities of integrating artificial intelligence with Spring Boot-based applications, we demonstrated how language models like GPT-4o can be enriched with external data sources to overcome their inherent limitations.

The key takeaway is understanding that models like GPT-4o lack native internet access due to their architecture and design constraints. In our scenario, where a user seeks recommendations for the latest science fiction books via an audio query, the challenge was the absence of up-to-date data. The solution involved leveraging “prompt enrichment,” where data from the Google Books API was incorporated into the prompt, enabling the model to generate a response based on current information.

This example highlights how Spring Boot and its ecosystem support the development of modern applications that are flexible, scalable, and tailored to dynamic user needs. Implementing such an architecture allows us to build systems capable of processing complex data, performing real-time analysis, and interacting with diverse data sources. As a result, users can experience smarter and more personalized applications that truly address their needs.

This example is evidence that well-designed integration between AI and Spring Boot can deliver tangible value by combining the strengths of AI models with the robustness of proven backend tools. It’s an ideal approach for creating applications suited to today’s technological challenges.

It’s important to remember that such systems are not intended to replace humans but to support them, enabling focus on more meaningful tasks. This approach brings AI and backend technology together in harmony, delivering solutions that meet real user needs while simplifying the work of developers. This is the beginning of a new era where technology, data, and intelligence work together more naturally than ever before. How we use this potential and the practical solutions we build will define the future.

