Git is a relatively young Version Control System (VCS) that started in 2005. In less than 20 years it has become the most popular tool in this category. Stack Overflow asks its users about the tools they use in its annual survey, and if we believe the results, Git’s market share is currently over 90%. Despite this, many users reduce it to git fetch, git commit, and git push. In general, that is good: it means Git is simple enough to be widely used even by people who never look at what is behind it.

In this article, I want to talk about the more advanced and less obvious possibilities that this VCS offers. We will need to explain some details of how Git is organized and how it works. Then we will see what opportunities it brings and what their consequences are. I encourage everyone to expand their knowledge, but at the same time, I assume you already use Git at an intermediate level or above.

Hashes

Depending on your experience with Git, you have probably already noticed that each commit has its own hash. Hashes are used widely in Git, not only for commits. If you already know how they are calculated and how they can change (even though they are constant), you can go directly to the “An unusual example” section.

If you are still here, it probably means that you are curious about the last part of the last sentence. So how is it? Are hashes constant or are they changing?

To answer this question, let us focus for a moment on another one: what actually is a hash? A hash is the result of a hash function computation. Then, what is a hash function?

A hash function is any function that can be used to map data of arbitrary size to fixed-size values, though there are some hash functions that support variable length output.
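To make the definition a bit more tangible, here is a small Python sketch using the standard hashlib module. It only illustrates the two properties mentioned above (fixed-size output, very different results for slightly different inputs); the inputs are arbitrary values chosen for this example.

```python
import hashlib


def sha1_hex(data: bytes) -> str:
    """Return the SHA-1 digest of data as 40 hexadecimal characters."""
    return hashlib.sha1(data).hexdigest()


short_input = b"a"               # one byte
long_input = b"a" * 1_000_000    # one million bytes
near_copy = b"b"                 # differs from short_input by a single character

# Every digest has the same length, no matter how large the input is,
# and the digests of "a" and "b" have nothing visibly in common.
for label, data in [("1 byte", short_input), ("1 MB", long_input), ("other", near_copy)]:
    digest = sha1_hex(data)
    print(f"{label:>6}: {digest} ({len(digest)} characters)")
```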

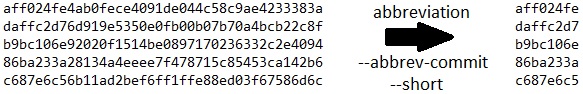

Hash in Git

And what is the hash in Git? Hashes in Git are the results of the widely known SHA-1 function [1] (and if you are a visual person, see the examples in Figure 2). The results vary widely even if the input varies only slightly. Now, what exactly is the input for a Git commit hash? It is the result of a computation in a few steps. First, Git computes a hash for the content of each changed file. Then, it calculates a hash (tree) that combines the hashes of all files in the snapshot. Finally, we have the commit details, which are:

- Author and committer [2] names and emails,

- Creation and delivery [3] dates and times,

- Commit message,

- Parent and tree (so the previously calculated hashes).

A set like this means that it is nearly impossible to create a commit that has the same hash as another one. The only way is to provide the same changes (or no changes) to the files, with the same author, message, and parent, and to manually manipulate the dates so that both commits are delivered at the same time.

The hash is calculated at commit time. It cannot be predicted in advance, it cannot be changed, and it cannot be set to some desired value. In the end, the hash of a commit is constant, and if you are still curious how it can change, the answer will come (in the “An everyday example” section).
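If you want to see these steps in action, here is a minimal Python sketch of how such hashes can be computed outside of Git. It assumes the default SHA-1 object format, a single directory with regular files only, and a commit without extra headers (such as signatures). The identity, timestamps, and file name are made up for illustration.

```python
import hashlib
from typing import Dict, Optional


def git_object_id(kind: str, body: bytes) -> str:
    """SHA-1 over the header '<kind> <size>' + NUL byte, followed by the body."""
    header = f"{kind} {len(body)}".encode() + b"\x00"
    return hashlib.sha1(header + body).hexdigest()


def blob_id(content: bytes) -> str:
    """Step 1: the hash of a file's content."""
    return git_object_id("blob", content)


def tree_id(entries: Dict[str, str]) -> str:
    """Step 2: the hash of a directory listing (file name -> blob id)."""
    body = b""
    for name in sorted(entries, key=lambda n: n.encode()):
        # mode 100644 = regular, non-executable file; the id is stored as raw bytes
        body += b"100644 " + name.encode() + b"\x00" + bytes.fromhex(entries[name])
    return git_object_id("tree", body)


def commit_id(tree: str, parent: Optional[str], author: str, committer: str, message: str) -> str:
    """Step 3: the hash of the commit details listed above."""
    lines = [f"tree {tree}"]
    if parent is not None:
        lines.append(f"parent {parent}")
    lines.append(f"author {author}")
    lines.append(f"committer {committer}")
    body = "\n".join(lines) + "\n\n" + message + "\n"
    return git_object_id("commit", body.encode())


ident = "Jane Doe <jane@example.com>"  # hypothetical identity
blob = blob_id(b"hello world\n")
tree = tree_id({"hello.txt": blob})
first = commit_id(tree, None, f"{ident} 1700000000 +0000", f"{ident} 1700000000 +0000", "Add hello")
# Same files, author, and message, but delivered one minute later:
later = commit_id(tree, None, f"{ident} 1700000000 +0000", f"{ident} 1700000060 +0000", "Add hello")

print(blob)            # 3b18e512dba79e4c8300dd08aeb37f8e728b8dad
print(first == later)  # False
```

The blob value is the same one that git hash-object reports for a file containing the line "hello world", which is a quick way to check that the sketch follows Git's scheme.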

[1] The newest versions of Git introduce support for SHA-256; however, it is not commonly used yet.

[2] In Git, the author of the changes need not be the same person as the one who delivers them (the committer).

[3] In Git, changes can be delivered at a different time than they were created (this is quite common with rebases, which will be described later).

Small digression

Now that we know all this, we can make a small digression, which is also the first non-obvious use of Git. You can commit your changes at any time you want: in the past or in the future, whatever you like. As long as no commit hooks in your repository verify entries like this, all you need to do is set and export the variable GIT_COMMITTER_DATE=<value> and add the switch --date=<value> to your git commit command. You can also change the author of the changes in a similar way. There is even an automated script available on GitHub for it.

But whenever, for whatever reason, you consider doing something like this, take the last line of that script into account and think it over. Remember also that such a change alters the hash of the changed commit and, consequently, of all commits based on it, according to what was already explained about how hashes are created.
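For illustration, the date trick described above could be wrapped in a few lines of Python. This is only a sketch: the helper name backdated_commit is made up, and it merely prepares the command and environment instead of running anything, so you can inspect both before deciding whether you really want to do it.

```python
import os
from typing import Dict, List, Tuple


def backdated_commit(message: str, when: str) -> Tuple[List[str], Dict[str, str]]:
    """Build the git command and environment for a commit dated `when`."""
    env = dict(os.environ)
    env["GIT_COMMITTER_DATE"] = when  # the delivery (committer) date
    # --date sets the creation (author) date
    argv = ["git", "commit", f"--date={when}", "-m", message]
    return argv, env


argv, env = backdated_commit("Fix typo", "2005-04-07T22:13:13")
print("GIT_COMMITTER_DATE=" + env["GIT_COMMITTER_DATE"], " ".join(argv))

# To actually create the commit, run the command inside a repository
# that has staged changes, for example:
#   import subprocess
#   subprocess.run(argv, env=env, check=True)
```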

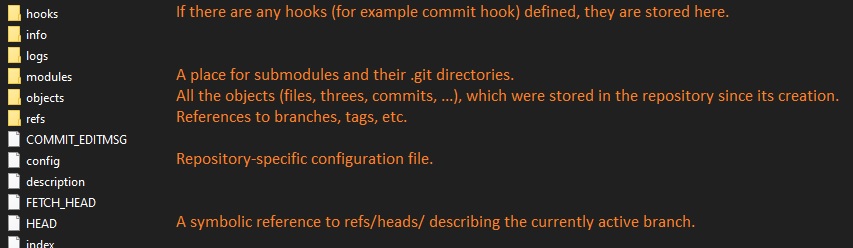

Git’s directory structure

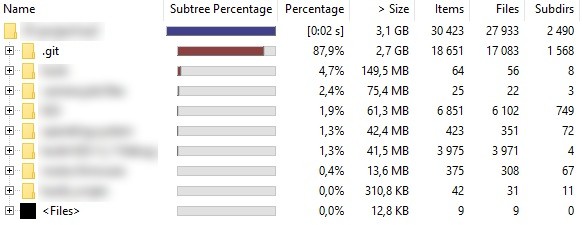

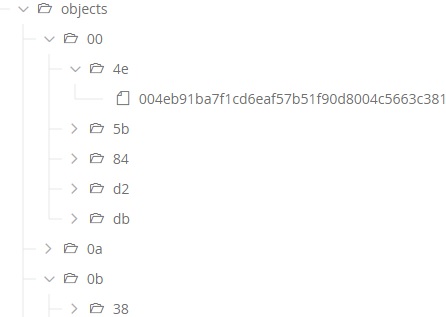

A lot has been explained about hashes so far, but it is still not enough to explain the problems in the examples below. In Figure 3 you can see the structure of the normally hidden .git directory, with descriptions of its more significant objects. The most interesting one for this article is the objects directory. If you want to know the details, check Git’s documentation page.

If you go to any of your Git repositories and check the content of the .git/objects directory, you will see tens or maybe hundreds of directories (up to 258, including info and pack). Their names consist of only 2 hexadecimal characters. These names are the first two characters of the hashes (yes, again) of the objects located in them. Keep in mind that every file stored in the repository has its equivalent [4] in the objects directory. Or actually, every version of every file has one.

That can explain why the biggest part of your repository is the .git directory. It stores not only a copy of all the current content, but because of…

Git is a free and open-source distributed version control system designed to handle everything from small to very large projects with speed and efficiency.

…Git’s distributed nature, it also stores all files that have ever existed in the repository. As a result, the .git directory can be even a few times bigger than the current data.

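[4] The word “equivalent” is a simplification. Every version of a file is first stored as a full, compressed copy. When Git packs its objects, it can store similar versions as differences, which works well for text files. Binary files such as archives usually differ too much between versions, so every change in them effectively keeps another full copy of the file.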
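The layout of the objects directory can be reproduced with a short Python sketch. It writes two versions of a file as so-called loose objects into a temporary directory that stands in for .git/objects, assuming the SHA-1 object format and ignoring packed objects entirely.

```python
import hashlib
import tempfile
import zlib
from pathlib import Path


def loose_object_path(objects_dir: Path, object_id: str) -> Path:
    """The first two hex characters name the directory, the other 38 the file."""
    return objects_dir / object_id[:2] / object_id[2:]


def write_blob(objects_dir: Path, content: bytes) -> str:
    """Store one version of a file as a zlib-compressed loose object."""
    raw = b"blob " + str(len(content)).encode() + b"\x00" + content
    object_id = hashlib.sha1(raw).hexdigest()
    path = loose_object_path(objects_dir, object_id)
    path.parent.mkdir(parents=True, exist_ok=True)
    path.write_bytes(zlib.compress(raw))
    return object_id


def read_blob(objects_dir: Path, object_id: str) -> bytes:
    """Read a loose blob back and strip the 'blob <size>' header."""
    raw = zlib.decompress(loose_object_path(objects_dir, object_id).read_bytes())
    _header, _, content = raw.partition(b"\x00")
    return content


with tempfile.TemporaryDirectory() as tmp:
    objects = Path(tmp) / "objects"  # stand-in for .git/objects
    v1 = write_blob(objects, b"version 1\n")
    v2 = write_blob(objects, b"version 2\n")
    # Each version of the file gets its own object under its own hash.
    for object_id in (v1, v2):
        print(loose_object_path(objects, object_id).relative_to(objects))
    print(read_blob(objects, v1))
```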

Another short digression

Usually, Git users become familiar with all the above when they make a mistake and lose their changes for the first time. Removing the only copy of a local branch, a wrong hard reset, or an unsuccessful rebase can lead to a situation where the required commits are no longer visible in the log. But they were not deleted (at least not yet [5]).

So here is a place for another short digression, which again covers a non-obvious possibility. Using git reflog you can find deleted commits and branches, using git log and git show you can find deleted files, and using git checkout <hash> or git cherry-pick <hash> you can restore them. The Internet is already full of step-by-step instructions for this. Just do not panic, everything is still there.

[5] Git implements cleanup and optimization mechanisms, so deleted elements that are no longer reachable from any branch are not stored in the local copy of the repository forever. The reflog has configurable expiry periods. See the documentation for the details.
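To show why the reflog helps here, below is a small Python sketch that parses reflog lines of the kind Git keeps in .git/logs/HEAD (old hash, new hash, committer identity, then a tab and a message). The sample content and its hashes are made up for illustration; on a real repository you would simply run git reflog.

```python
from typing import Dict, List

# Made-up sample: an initial commit, a second commit, then a hard reset
# that makes the second commit disappear from the log.
SAMPLE_REFLOG = (
    f"{'0' * 40} {'a' * 40} Jane Doe <jane@example.com> 1700000000 +0000\tcommit (initial): Start\n"
    f"{'a' * 40} {'b' * 40} Jane Doe <jane@example.com> 1700000100 +0000\tcommit: Add feature\n"
    f"{'b' * 40} {'a' * 40} Jane Doe <jane@example.com> 1700000200 +0000\treset: moving to HEAD~1\n"
)


def parse_reflog(text: str) -> List[Dict[str, str]]:
    """Split each line into old hash, new hash, identity, and message."""
    entries = []
    for line in text.splitlines():
        if not line.strip():
            continue
        head, _, message = line.partition("\t")
        old, new, who = head.split(" ", 2)
        entries.append({"old": old, "new": new, "who": who, "message": message})
    return entries


def previous_heads(entries: List[Dict[str, str]]) -> List[str]:
    """Every hash HEAD has pointed at, newest first, without duplicates."""
    seen, result = set(), []
    for entry in reversed(entries):
        if entry["new"] not in seen:
            seen.add(entry["new"])
            result.append(entry["new"])
    return result


for commit in previous_heads(parse_reflog(SAMPLE_REFLOG)):
    print(commit)
```

The second hash in the output is the commit that the reset removed from the log. It is still recorded, so it can be passed to git checkout or git cherry-pick.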

An everyday example… of rewriting history

Version Control Systems are in general designed to track history. They keep what was changed, when, and by whom. Their biggest value is that every change is fully reversible. Therefore, the last thing you would expect of them is that history can be changed. Because the rest of this article is about changing and rewriting history, I should probably start with a basic example of why SOMETIMES it makes sense.

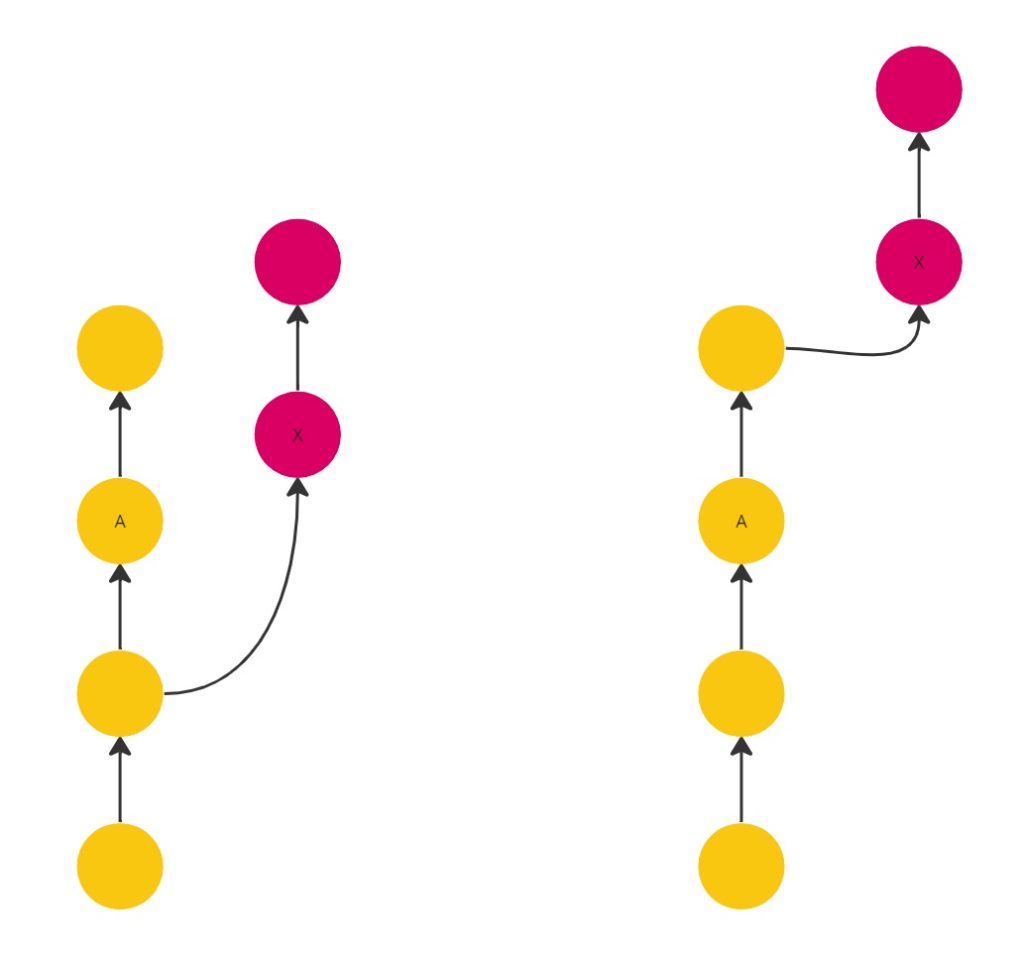

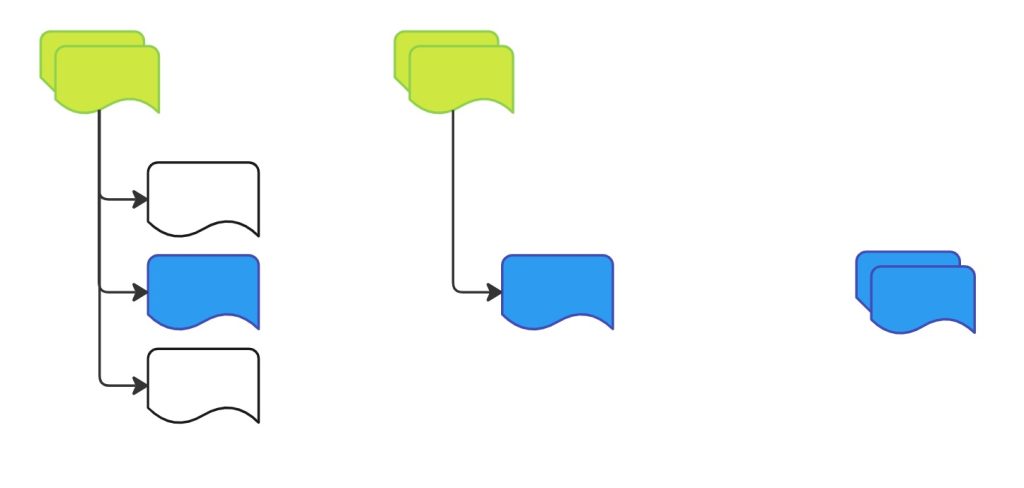

In Git repository maintenance there are two schools: merges and rebases. Merges create an additional commit to merge the changes and produce a multiline history, but they do not change the history. Rebases do not require additional merge commits and produce a linear history, but they require changing the history.

You could say: “Wait, what do you mean by rewriting? I am not changing anything. I am only moving my commits from one base to another.” And that is enough. A rebase like this abandons the previous commits and creates new ones with similar content. It is similar and not the same (even if the files, commit message, and author are still the same) because the parent has changed. That leads to creating “the same” commit with a new hash value.

So, this is how a commit can “change” its hash. One more thing that changes in this case is the delivery date (it is updated), which will no longer be equal to the creation date (it remains original). And what if commits A and X (marked in Figure 5) change the same line of the same file? You will need to resolve the conflict. Then, the “new” X will definitely not be the same as the “previous” X.

Similar things happen whenever you squash your commits, amend a commit, or change the order of commits with an interactive rebase. All these operations change history. We change the Git history daily, even though we use Git to keep the history unchangeable.
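The effect of a rebase on hashes can be simulated without Git. The Python sketch below is a deliberate simplification: every commit uses the same (empty) tree, so the only things that differ after the "rebase" are the parent and the delivery date. The identity, messages, and timestamps are hypothetical.

```python
import hashlib
from typing import Optional

EMPTY_TREE = "4b825dc642cb6eb9a060e54bf8d69288fbee4904"  # id of Git's empty tree
IDENT = "Jane Doe <jane@example.com>"                     # hypothetical identity


def commit_id(tree: str, parent: Optional[str], created: int, delivered: int, message: str) -> str:
    """Hash a commit from its tree, parent, creation/delivery dates, and message."""
    lines = [f"tree {tree}"]
    if parent is not None:
        lines.append(f"parent {parent}")
    lines.append(f"author {IDENT} {created} +0000")       # creation date
    lines.append(f"committer {IDENT} {delivered} +0000")  # delivery date
    body = ("\n".join(lines) + "\n\n" + message + "\n").encode()
    header = f"commit {len(body)}".encode() + b"\x00"
    return hashlib.sha1(header + body).hexdigest()


base = commit_id(EMPTY_TREE, None, 100, 100, "Base")
a = commit_id(EMPTY_TREE, base, 200, 200, "A")  # new commit on the target branch
x = commit_id(EMPTY_TREE, base, 150, 150, "X")  # our branch, started from Base
y = commit_id(EMPTY_TREE, x, 160, 160, "Y")

# "Rebase" X and Y onto A: same content, message, author, and creation date,
# but a new parent and a new delivery date.
x_new = commit_id(EMPTY_TREE, a, 150, 300, "X")
y_new = commit_id(EMPTY_TREE, x_new, 160, 300, "Y")

print("X:", x, "->", x_new)
print("Y:", y, "->", y_new)
```

Note that Y changes even though nothing about Y itself was touched: its parent X received a new hash, and the parent's hash is part of the input.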

An unusual example… but still not so rare

Now, let us start the real fun. Here, I want to show you an example that will be the basis for the rest of this article. Imagine a repository where someone introduced some big files a few years ago. Let them be binary, and for simplicity let them all have the same extension. To make it concrete: someone introduced a few big zip archives, which were changed only from time to time.

In general, Git is not the best choice for files like this. There are other VCSs that should be considered if binary files are more common in your work. It is worth mentioning HelixCore and Unity DevOps (previously Plastic SCM) here. If you are using Git, consider not keeping binary files in it. It is supported, so there is no problem in doing it. However, just because something is possible does not mean that you should do it.

Going back to the example, assume that these files are no longer needed, and we can remove them. That is great news: git rm *.zip && git commit -m "remove archives" && git push, and we are done. Are we? It depends…

If you do not need the files now, but you need to keep their history, then the answer is yes. But if you are not interested in these files at all and want to forget about them as if they had never existed, then the answer is no. Independently, you may also wonder why you need to clone a 5 GB repository every time, while its current size is around 500 MB. If you want to solve this issue, you are also not done yet.

Extension-based solution

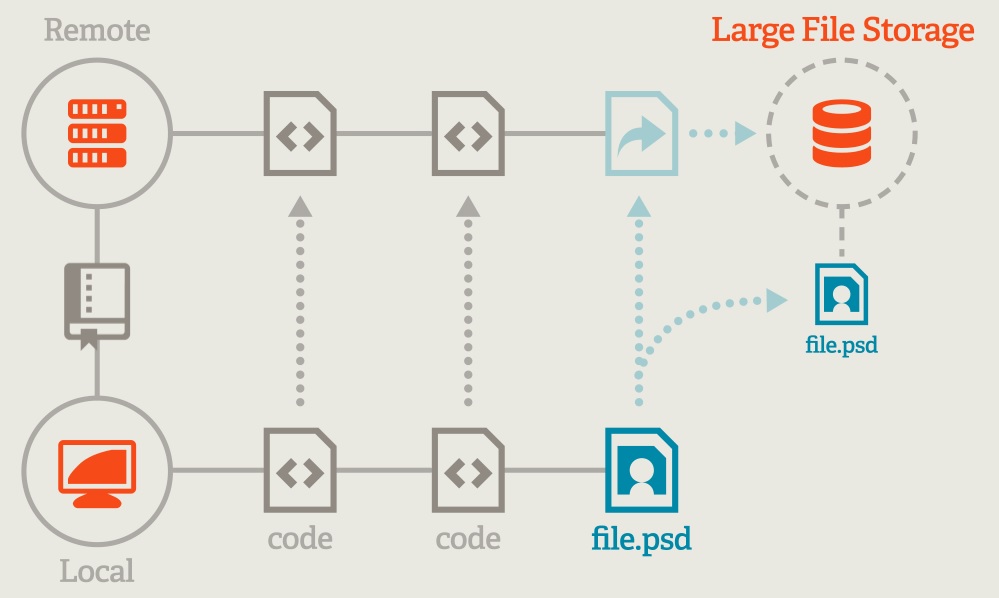

If you do not want to keep big files in your repository anymore, there are at least a few options worth considering. One of them is Git Large File Storage (LFS). The idea of this open-source extension is well described in Figure 6 from the official webpage.

Every local code change goes to the remote as a code change. However, when a large file is introduced locally, it becomes a reference to LFS on the remote, and the file itself is stored there. Great, but how does it work when I already have large files in the repository?

There is a command prepared for it: git lfs migrate, which will do the job. You can choose what you mean by “large files”: it can be every file bigger than a set value, or you can select files by extension (but you cannot combine the two, for example an extension only when the file is bigger than some value). By adding the options import --everything --include="*.zip" to that command, we can solve the issue from the previous section. All matching files will be replaced with references throughout the history of the repository.

The files will be transferred to an external location (usually located next to the repository, but it can be configured to be any other server). The repository size will effectively decrease. After the migration, the history will look as if the files had never been added to Git and had always been handled by LFS. This is a great option for different scenarios:
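The “reference” that replaces a large file is a tiny text file, usually called a pointer. As a sketch, assuming the version 1 pointer format from the Git LFS specification (a version line, a SHA-256 object id, and the size in bytes), it can be generated like this in Python. The archive content below is a placeholder.

```python
import hashlib


def lfs_pointer(content: bytes) -> str:
    """Build the small text file that Git stores instead of the large file."""
    oid = hashlib.sha256(content).hexdigest()
    return (
        "version https://git-lfs.github.com/spec/v1\n"
        f"oid sha256:{oid}\n"
        f"size {len(content)}\n"
    )


archive = b"PK" + b"\x00" * 1_000_000  # placeholder for a big zip archive
pointer = lfs_pointer(archive)
print(pointer)
print(f"{len(archive)} bytes replaced by {len(pointer)} bytes in the repository")
```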

- You do not need large files anymore, and you want to shrink repository size, but you need to keep a history of them.

- You do not need the archives daily. You want to speed up cloning and decrease network transfers. With LFS you can decide each time whether you want to download the large files or skip them.

- Your repository is just too big to fit the hosting requirements. You need to decrease its size, but you do not want to lose anything from history.

If it is so great, then why is it not standard everywhere and for everybody? Different solutions have different needs. Sometimes you need a spaceship and sometimes only a paper plane. LFS introduces additional effort. Everybody who uses the repository has to install the extension locally. Everybody has to learn how to configure and use it. The first configuration (including new storage) has to be done by somebody. Even if it is all relatively simple then it is still an additional effort that you may not want. And finally, the biggest disadvantage, which is also a core topic here: LFS migration rewrites the history of the repository.

As a result, every single commit based on the first one that introduced a large file gets a new hash. If the repository is used by many, many people, a migration like this will be hard to carry out. Everybody will have to rebase their local changes onto a completely new tree. It will look the same, but it will not correlate with the previous one.

To close this topic, one more fun fact about how LFS stores files. Underneath, it uses… hashes. You can see the organization of directories in Figure 7. It is like what Git does, but this time the objects directory has two levels of directories, and the file at the end has the full hash as its name (it is not shortened by the characters already visible in the directories’ names).
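The two-level layout can be expressed in a few lines of Python. This is a sketch assuming the default local storage location (.git/lfs/objects) and SHA-256 object ids; the function name is made up.

```python
import hashlib
from pathlib import PurePosixPath


def lfs_object_path(content: bytes) -> PurePosixPath:
    """Two directory levels taken from the hash, then the full hash as the file name."""
    oid = hashlib.sha256(content).hexdigest()
    return PurePosixPath(".git/lfs/objects") / oid[:2] / oid[2:4] / oid


print(lfs_object_path(b""))
```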

Tool-based workaround

I am not sure that the word workaround fits best here. As already said: different solutions have different needs. Maybe this will already be a solution for somebody. It follows the KISS principle. LFS can move files that we do not want in the repository somewhere else. But what if we do not want these files at all? What if we do not want to extract them, but to forget about them as if they had never existed? Maybe at some point someone just fired git add -A and did not notice that too much was added. The files were reverted two weeks later, but they are still reachable through the Git history, and the repository has become unnecessarily large.

In this scenario, we need to find the commit that introduced the issue, revert the problematic files, amend the changes, and replace the original commit as if it had never existed. Now here comes the fun part: it happened a few years ago, and there are several branches and commits on top of it. Everything has to be rebased onto the fixed commit. Fortunately, there are tools that automate this process.

Git 1.5.3 introduced the git-filter-branch tool. However, it is worth mentioning only if you use Git below 2.22. Otherwise, try to act like you never heard about it. Filter-branch has safety and performance issues. Currently, it is not recommended even by the official Git documentation. Why is it still there? Probably because of backward compatibility, and probably because its successor, filter-repo, is still an extension package and not a part of the Git core package.

Ok, enough talking, a little more action:

git filter-repo --invert-paths --path-glob "*.zip"

And that is it! All zip files across the whole repository were removed, and everything based on those changes was automatically rebased. Lines like the above can be found on the filter-repo manual page, which contains multiple useful examples.
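To get a feeling for which paths such a filter touches, here is a rough Python approximation of the selection logic. It is not filter-repo's implementation. It assumes that the glob is matched against the full path in the style of Python's fnmatch, where * also matches directory separators, and that --invert-paths drops the matching paths instead of keeping them. The file names are made up.

```python
from fnmatch import fnmatchcase
from typing import Iterable, List


def drop_matching(paths: Iterable[str], glob: str) -> List[str]:
    """Keep every path that does NOT match the glob (the inverted selection)."""
    return [path for path in paths if not fnmatchcase(path, glob)]


history_paths = [
    "src/main.py",
    "release.zip",
    "docs/archive/old.zip",
    "notes.zip.txt",
]

for path in drop_matching(history_paths, "*.zip"):
    print(path)
```

Under these assumptions, archives in nested directories are dropped as well, while a file that merely contains ".zip" in the middle of its name stays.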

Another not obvious solution

This time we need to define our original problem a little bit differently. Still, we have big zip archives which we want to exclude from the repository, but this time at least they all are in one directory (actually, nobody said before it was not like this).

In the same version 1.5.3 of Git (as for filter-branch), one more feature was added: submodules. A submodule can be (and is) a standalone, self-sufficient repository. It has its own .git directory and structure like every other repository. Every Git repository can become a submodule of another.

The host repository’s directory structure is a little bit mixed compared to natural expectations. When you open the directory that is meant to be a submodule, you will see all its files. But the .git directory of the included repository will be stored as .git/modules/<submodule_name>. However, what is most important, each time you need to clone or update the repository, you can decide whether you care about that submodule or not. Just like with LFS, you do not have to download the whole content every time.

In general, the procedure of creating a submodule from an existing directory has two parts. The first is visible in Figure 8. It is about creating a new repository from an existing directory. It will preserve its history and commits.

To achieve this, you need to have an additional, fresh copy of your repository and run a command:

git filter-repo --subdirectory-filter <path_to_directory>

This will remove all other paths and all history that is not related to the given path. Then, the given path will become a new root directory. Everything is prepared, now you need to push it as a new repository:

git remote add origin [email protected]:project/subrepository

git push --set-upstream origin master

The second part of this procedure is visible in Figure 9.

In this part, there are two possibilities for the first step. It is about removing the existing directory in the original repository. You can go with a simple git rm -r <path_to_directory> but this will not remove the files from the objects directory. They will be reachable at any time for older commits. You can do it in a more sophisticated way as well:

git filter-repo --path <path_to_directory>/ --invert-paths

A line like this will remove the given path, but this time it will be removed through all of history. In this way, the original repository becomes effectively smaller, and we will keep the history of extracted items in a newly created repository. The last thing that has to be done is the addition of a submodule.

git submodule add [email protected]:project/subrepository <path_to_directory>

git submodule update --init

git commit -m "Submodule introduced"

git push

Making it as if it has always been like this

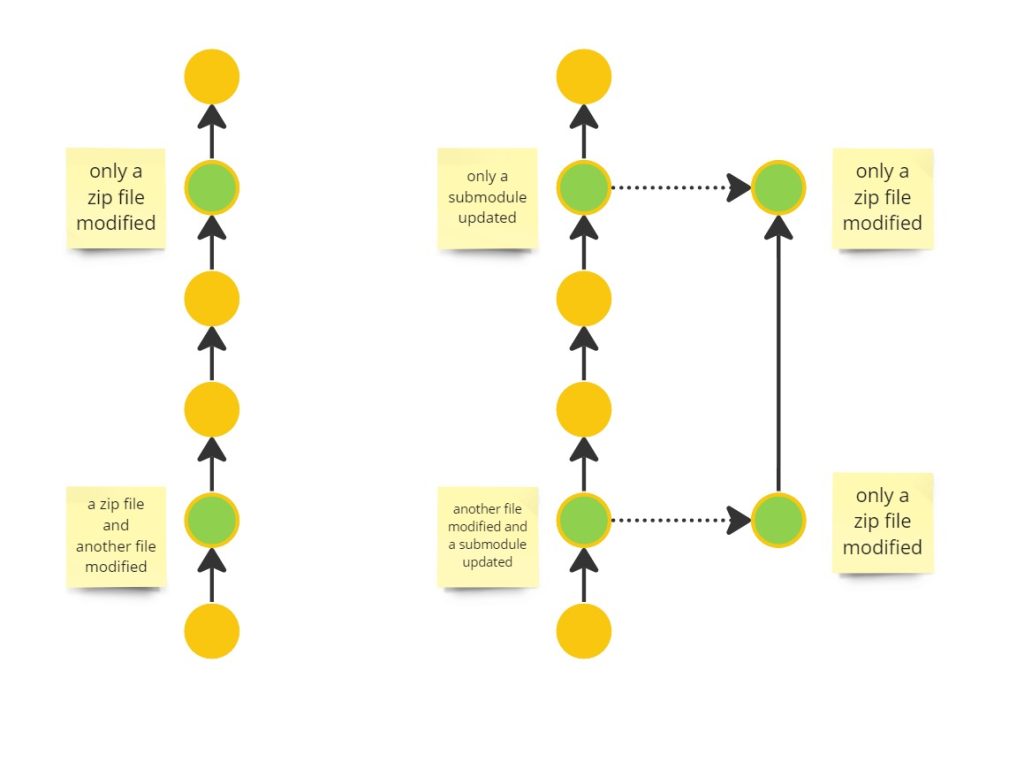

The above solution is quite good if you care about the files but do not want to keep them in the main repository. But it is still not perfect when you care about preserving history (and you probably should). Why is that?

After all, we have a history of extracted objects, so what is wrong? There were two options in the second part. The first is keeping the objects and their history in the main repository before the directory was replaced with a submodule. The second is removing both, decreasing the repository size. And why not have them both? History of transferred objects, related to other changes in the main repository, but without the objects?

So, what we want to achieve is like this: any time the original object was changed in the main repository, there will be a reference to extracted submodule, directly pointing to this change. In other words, we want to make the original repository look like the submodule was always there, and each time somebody changed the object in it, make the submodule update in the main repository as well, to provide a complete set of changes in both repositories. This flow is presented in Figure 10.

To achieve this goal, additional preparation and actions are needed compared to the previous instruction. First of all, this is the place where I should mention callbacks. They are an open gate for introducing your own extensions to the filter-repo extension. Any time you feel that no option in filter-repo satisfies your needs, remember that you can create one on your own. All you need to do is write some Python code or use one of the already prepared examples.

Did someone realize that you should have had, for example, a license file added in the first commit? Do you now have a .gitignore file, but files matching it were added previously and you want to make them vanish? No problem, humanity has ready solutions. Quoting a part of the project’s readme:

While most users will probably just use filter-repo as a simple command line tool (and likely only use a few of its flags), at its core filter-repo contains a library for creating history rewriting tools. As such, users with specialized needs can leverage it to quickly create entirely new history rewriting tools.

Step-by-step instruction

The step-by-step instruction on achieving the effect from Figure 10 might become another article not shorter than this one. But in general, the following steps have to be performed:

1. Create a handle for each commit so that commits can be unambiguously matched between the future repositories even when their hashes differ. It might be the original hash added to the commit message with some reference marker (so that it is not mixed up with hashes already visible in commit messages).

2. Because the first step already rewrites the whole repository (every single commit has to be updated), store the result somewhere separate from the origin. This copy will act as the main repository in the next steps.

3. Extract the required submodule from a copy of the main repository exactly as described in the “Another not obvious solution” section and push it to the remote.

4. Go back to the main repository and rename (not delete) the source directory throughout the history. This is temporary. It keeps the information about when the submodule reference has to be updated. It also makes room for the submodule to be placed where it should be.

5. Create a submodule in the very first commit of the repository, at the original directory location.

6. Go through the changes and see when the renamed directory was changed.

7. Using the original hash stored in the commit message, find the corresponding commit in the extracted repository.

8. Update the submodule to the current hash of the commit you found.

9. Repeat steps 6-8 until all commits are processed.

10. Remove the renamed directory and all its history.
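The handle from the first step and the lookup from the seventh step could be sketched in Python as follows. This is an illustration only, not the code from our project. The marker name Original-Commit is made up, and the first function assumes that a commit is passed as an object exposing message and original_id as bytes, which is my understanding of what a filter-repo commit callback receives; here a simple stand-in object is used so that the example runs without filter-repo.

```python
import re
from types import SimpleNamespace
from typing import Dict, Iterable, Tuple

MARKER = b"Original-Commit: "  # hypothetical reference marker


def tag_with_original_hash(commit) -> None:
    """Append the pre-rewrite hash to the commit message as a handle."""
    commit.message = (
        commit.message.rstrip(b"\n") + b"\n\n" + MARKER + commit.original_id + b"\n"
    )


def index_by_original_hash(log: Iterable[Tuple[bytes, bytes]]) -> Dict[bytes, bytes]:
    """Map original hash -> current hash, given (current_hash, message) pairs."""
    pattern = re.compile(rb"^" + re.escape(MARKER) + rb"([0-9a-f]{40})$", re.MULTILINE)
    index = {}
    for current_hash, message in log:
        match = pattern.search(message)
        if match:
            index[match.group(1)] = current_hash
    return index


# Stand-in for a commit object; the hashes are made up.
commit = SimpleNamespace(message=b"Update archive\n", original_id=b"a" * 40)
tag_with_original_hash(commit)
print(commit.message.decode())

# Later, in the extracted repository, the same commit has a new hash:
extracted_log = [(b"b" * 40, commit.message)]
print(index_by_original_hash(extracted_log))
```

Because the handle travels inside the commit message, it survives every later rewrite, which is what makes the matching between the main repository and the extracted one possible.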

It is even hard to calculate how many times the history of the repository will be rewritten in this process. But it guarantees that all the goals will be achieved, and you will not lose anything from your history. And what if you have to do it for more than one directory to be extracted? What if you need additionally to turn on the LFS support for some of the extracted submodules?

In such a case you will end up building a spaceship like we built in one of our projects…

Final thoughts

Usually, nobody expects that the history of the repository will change. I hope that I have convinced you at least a little bit that sometimes it should. Git, its tools, and its extensions give great power, which, of course, has to be used with great responsibility. When you do some local rebases, squashes, or amends, nobody will accuse you of dishonesty. If some organizational changes are required, it might be painful, but when it is performed with care, it ends up only with a different structure.

Finally, you can use these possibilities to pretend that something is different than it really was. No tool will protect you against issues like this. Fortunately, there are always people that are using these tools and they are the last line of defense. And even if Git allows you to do the things that you should not do it is also distributed, therefore, everybody has their own truth.
